2011-10-01

Abstract

This month’s test proved another epic – not in terms of the number of products entered but rather in the time taken to get through them all. John Hawes reveals the details of the troublesome few and the better-behaved majority.

Copyright © 2011 Virus Bulletin

We reached something of a milestone this month, as shortly before this comparative got under way we announced some major changes to the way in which the VB100 will operate. With the recent unveiling by The WildList Organization of the Extended WildList – a significant expansion of the range of malware covered by the list – it was clear that some decision would have to be made as to how to incorporate the new sample set into our testing, and it seemed like a good time to roll in some more updates which have been in the pipeline for some time. As of the next test, we will be allowing all products access to the Internet for the bulk of our testing. This will allow us to measure the effectiveness of the ‘cloud’ lookup systems that more and more products are including these days, while for those yet to move in this direction it will also show how well more traditional local updates are kept up to date.

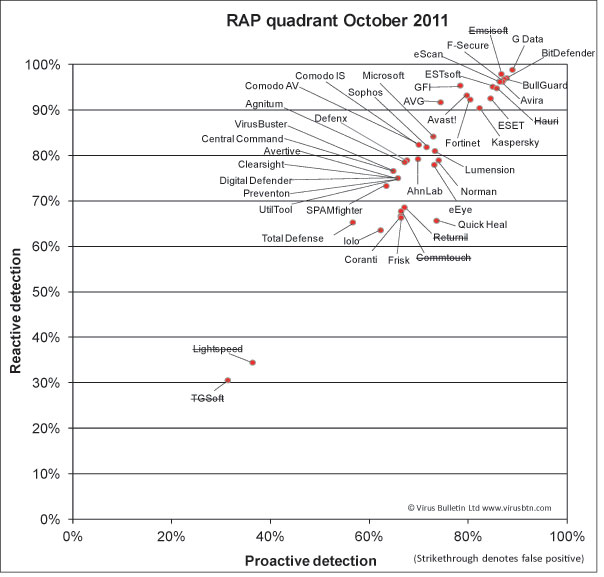

This change will affect our certification programme, with the core components – the WildList sample sets and our false positive testing – being run multiple times during the test period, and products required to achieve the same high standards we have always demanded on a continuous basis. We will also be adding some new sample sets which should more closely reflect the vendors’ ability to keep up with the latest threats, and will be running our speed and performance measures in a more realistic environment; we hope to add some new measures to the selection we currently report. As our RAP test includes a retrospective component which cannot be properly measured without denying products access to their latest updates, this part of the comparative will remain unchanged.

While we had hoped to include the new Extended WildList as part of the requirements for this month’s certification testing, it quickly became clear that a little more time was required for the new process to settle in, so it was decided that the new set of samples would be included this month as a trial only, with detection rates reported but not included as part of the certification requirements. As of the next test, however, we will be requiring full coverage of the Extended list, making further demands on the products hoping to achieve certification.

With all this on the horizon, the current test is the last that will operate in the way in which the VB100 has been run more or less since its introduction in 1998 – at least as far as the certification component is concerned. The platform for this last test is the suitably traditional Windows Server 2003 which has been with us for quite some time. The server-grade sibling of the evergreen XP was first released in April 2003, with the R2 edition used in this test released in late 2005. Having visited the platform most years since its release, preparation for the test was fairly straightforward. The installation process is simple and relatively speedy, even with the addition of service packs to bring it up to the current minimum state, and after adding our standard suite of handy tools, installing the drivers required for networking and other basic functions, and adjusting the look and feel to suit our tastes, images were taken for the tests without undue complications. We were pleased to find the platform remains as stable and responsive as ever, with its footprint on disk considerably smaller than its more complicated successors.

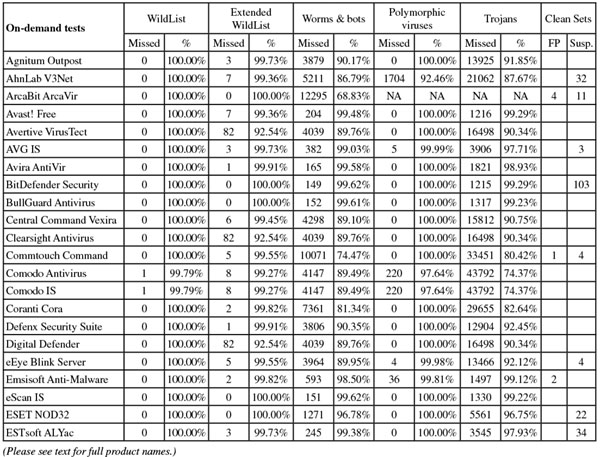

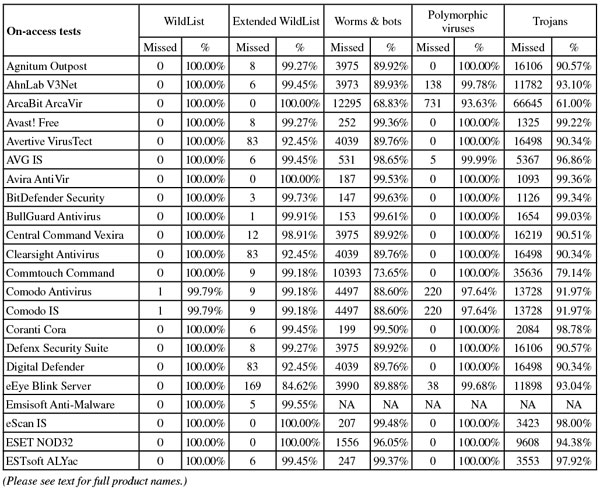

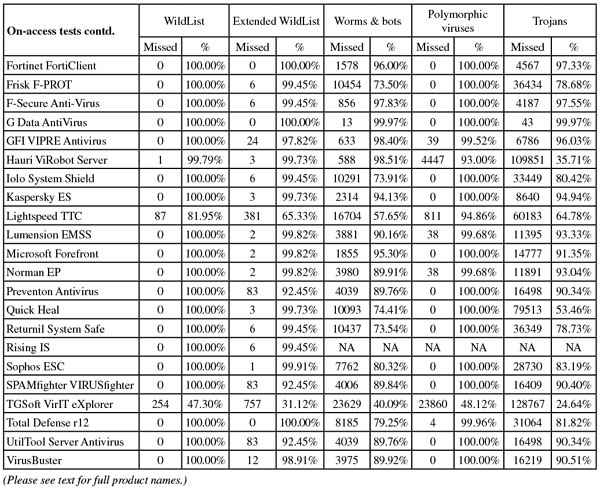

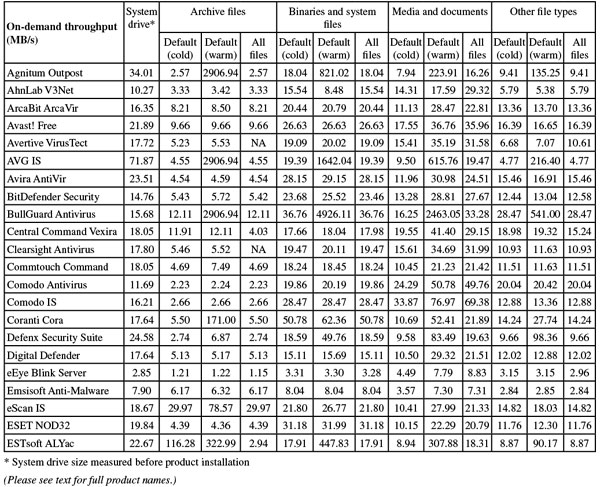

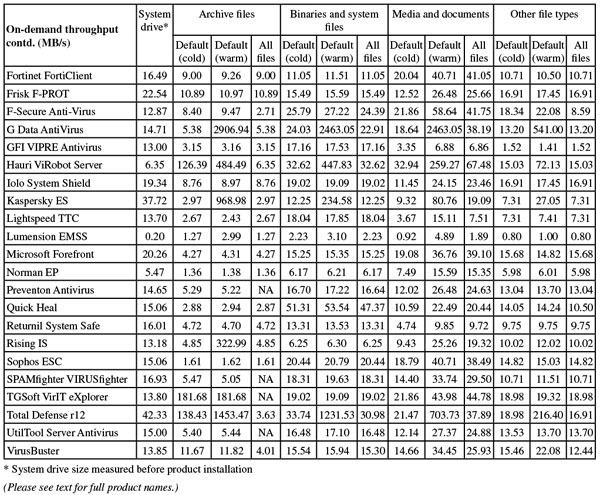

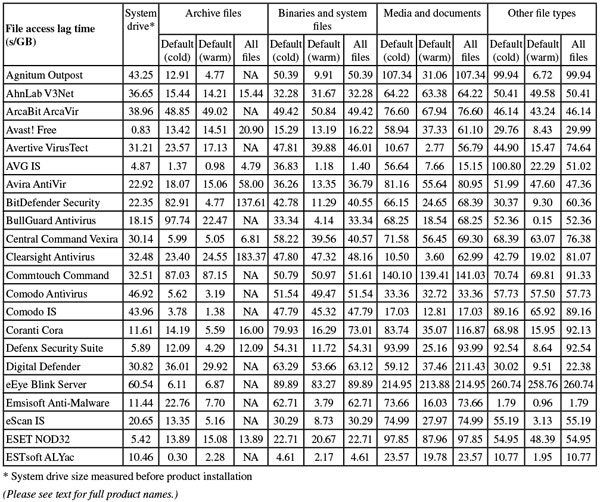

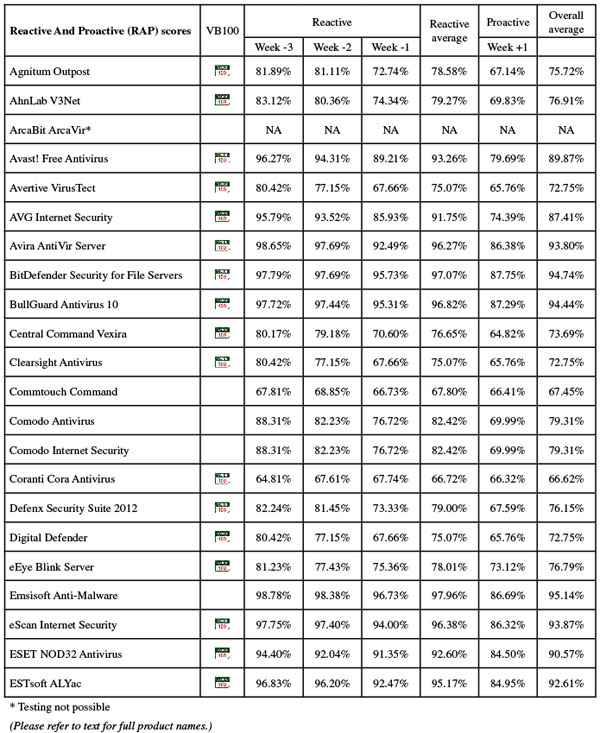

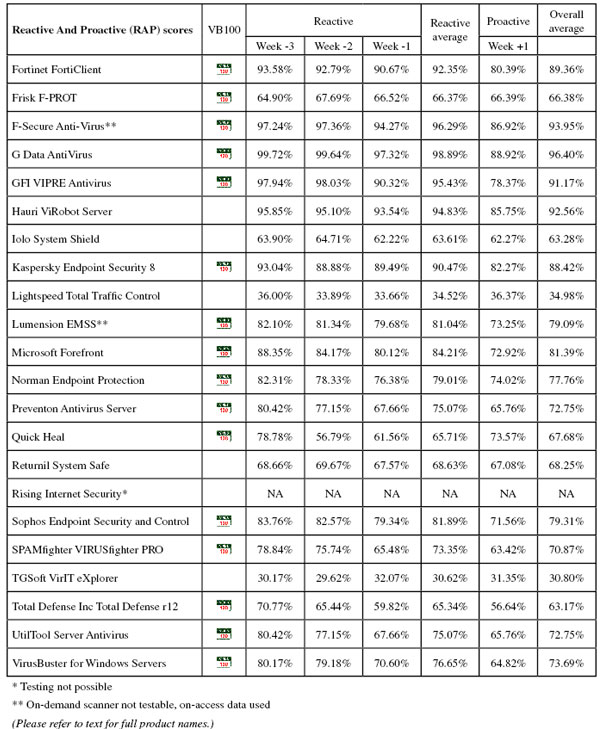

The product deadline was set for 24 August, and the test sets were built around this date. The RAP sets were compiled using samples first seen in the three weeks before and one week following the deadline, and the trojans set and the worms and bots set were both put together from items gathered in the month prior to that period. Little adjustment was made to the set of polymorphic viruses, and the clean sets saw the routine tidying up of older samples and expansion with a selection of new packages, focusing on business-related software in deference to the platform chosen for this month. Final figures showed the clean set weighing in at just over half a million files, around 140GB, with the four weeks of the RAP sets and the worms and bots set measuring around 30,000 samples each. The trojans set was a little larger at just over 150,000 unique files.
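As a rough illustration of how the RAP weeks described above relate to the deadline, the sketch below assigns a sample to a week based on the date it was first seen. The exact boundary rules are not given in the text, so the 7-day windows, the treatment of the deadline day as part of week -1, and the function name `rap_bucket` are all assumptions made for the example.

```python
from datetime import date

# Product submission deadline stated in the text.
DEADLINE = date(2011, 8, 24)


def rap_bucket(first_seen, deadline=DEADLINE):
    """Return the RAP week label for a sample, or None if it falls outside.

    Assumptions (not from the article): each week is a 7-day window,
    the deadline day itself belongs to week -1, and week +1 covers the
    seven days after the deadline.
    """
    delta = (first_seen - deadline).days
    if 1 <= delta <= 7:
        return "week +1"
    if -20 <= delta <= 0:
        # 0..6 days before -> week -1, 7..13 -> week -2, 14..20 -> week -3
        return "week -{}".format((-delta) // 7 + 1)
    return None
```

Under these assumptions, a sample first seen on 17 August 2011 would land in week -2, and one first seen on 1 September 2011 would fall outside the RAP sets altogether.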

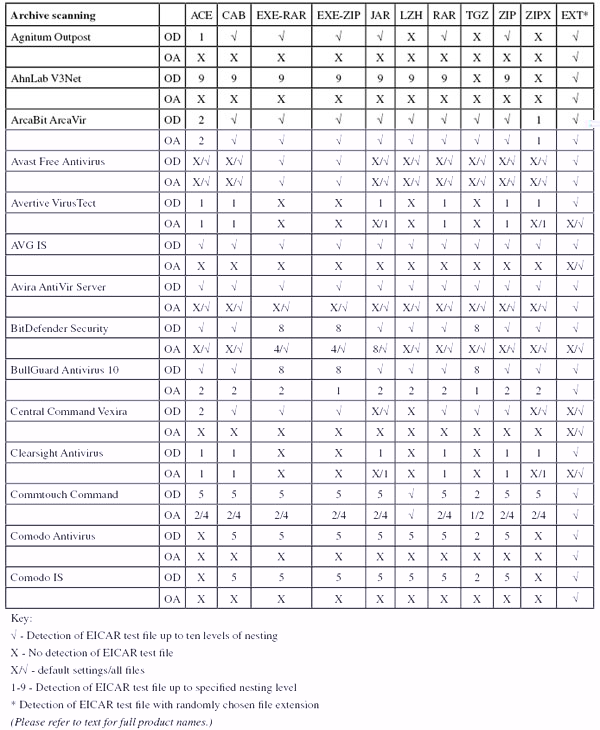

The WildList set was the first area to be affected by the recent changes to the way in which the list is compiled: the legacy naming scheme has been put into retirement, replacing the names that aim to reflect those used by anti-malware solutions with less human-readable reference IDs for each sample. This meant some tweaks to the way the samples are processed, and all samples were re-validated regardless of whether they were new appearances on the list or long-time regulars. A handful of true viruses were spotted in the set, including the nasty W32/Virut family which has been a regular for several years, causing a number of upsets with its complex polymorphism, and as usual the latest strain was replicated in large numbers to ensure detection approaches were fully exercised.

The new Extended WildList was also put through our validation processes, the samples mostly proving to be fairly unexciting trojans, although a few items appeared likely to be flagged only as greyware by some vendors. Most interestingly, a handful of Android samples were included in the list, a reflection of the increased targeting of mobile platforms by malware creators. These samples presented some problems for our testing approach, as many standard Windows security solutions are not designed to deal with such items. Even where detection for such items was available, we anticipated that many products would not pick up the files with their default on-access settings – many products are limited to checking a standard set of Windows executable file extensions and the files were mainly present in install package archives or as .dex format executables.
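The extension-based filtering described above can be pictured with a small sketch. The extension list and the function name `scanned_on_access` are hypothetical; real products ship their own lists and may also inspect file contents, so this shows only why a `.dex` or `.apk` file might never reach the scanner under such a default.

```python
from pathlib import PurePath

# Hypothetical default list of Windows executable extensions; each real
# product defines its own set.
DEFAULT_ON_ACCESS_EXTENSIONS = frozenset({".exe", ".dll", ".scr", ".com", ".sys"})


def scanned_on_access(filename, extensions=DEFAULT_ON_ACCESS_EXTENSIONS):
    """Return True if an extension-only filter would pass the file to the scanner."""
    return PurePath(filename).suffix.lower() in extensions
```

With this filter, `setup.EXE` would be checked on access while `classes.dex` and `game.apk` would be skipped, regardless of whether the engine has detection for their contents.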

Product submission day brought no major surprises – a haul of 44 entries was around the anticipated number for a server test. A few major names were missing, and a handful of new products appeared for the first time, promising some interesting testing experiences. In a suitably sombre mood we embarked on the last comparative of its kind, preparing to bring an era to an appropriately solid end.

Version 7.5.1 (3791.596.1681)

First up on this month’s roster, Agnitum’s Outpost product rarely misses a comparative these days and generally performs well, although in recent tests we have noted a rather worrying lack of pace in getting through our suite of tasks. The 95MB installer runs through a number of stages, mainly focusing on the firewall components in which Agnitum specializes, but gets through in reasonable time, completing with a reboot.

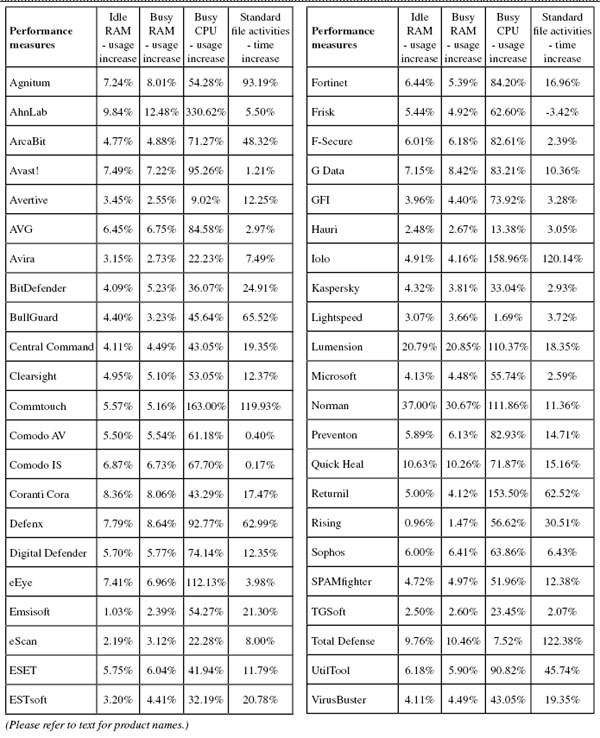

The interface is sturdy and businesslike without undue flashiness, providing a reasonable level of control for the anti-malware component, and for once tests ran through in decent time with no nasty surprises. Scanning speeds were not spectacular to start with but benefited greatly from some optimization in the warm runs, while on-access overheads were pretty heavy initially, again improving notably in the repeat measures. RAM usage was around average but CPU use was fairly high at busy times, with a pretty heavy impact on our set of standard tasks.

Detection rates were decent if again unspectacular, with solid coverage in most areas, declining fairly noticeably through the weeks of the RAP sets. In the new Extended WildList, a handful of items were not covered on demand, most of them in formats that are not executable on this platform, and a few more were not blocked on access. The core certification areas were well handled though, and Agnitum earns another VB100 award.

The company has a solid record of late, with five passes in the last six tests, only the annual Linux test having been missed, and nine passes in the last two years. This month, all tests completed just about inside the 24-hour period planned for each product, with no crashes or other issues.

Please refer to the PDF for test data

Engine version 2011.08.23.93

AhnLab’s product is another which routinely gives us a few headaches, usually with the logging system which rarely stands up to our heavy demands. The installer weighed in at 204MB, and after a few brief questions took some time to run through its business, but no reboot was needed to complete the process. The interface is crisp and clean – a little confusing in places, but reasonably navigable – and provides a decent but not exhaustive level of fine-tuning.

Speeds were slightly below par on demand, and a little slower than average on access, with slightly high RAM use and a very high figure for CPU consumption at busy times – more than double the next highest measure this month. All this hard work paid off however, with our set of tasks completed very speedily.

Detection rates involved more hard work, with the unreliable logging system requiring us to split some of the tests into smaller chunks, and even then occasionally data could not be relied on and tests needed repeating. Scores were decent though, with reasonable levels in the main sets and a steady but not too steep decline through the RAP sets. A few items were missed in the new Extended WildList set, but on access at least these were all in non-standard formats not included in the usual list of scannable file types (mainly Android malware); somewhat oddly, several of these were spotted on demand, but a few others were ignored.

The clean set brought up the usual warnings on all OLE2 files containing macros, but no real false alarms, and with the WildList set properly handled a VB100 award is earned. AhnLab’s recent history is a little unsteady, with three passes from four entries in the last six tests; six passes and three fails from nine attempts in the last two years. This month, thanks to the extra work required to coax usable logs out of the product, testing took a day and a half – a little more than planned but not excessively so, with no serious issues other than the less than robust logging system.

Please refer to the PDF for test data

Version 11.08.3203.1

ArcaBit continues to bravely try its hand in our tests, despite a lengthy run of bad luck. The current version was provided as a 150MB installer, including all required updates, and after a fairly lengthy and silent pause at the beginning, the set-up process ran through a few rapid steps, followed by another lengthy wait before finally completing. No reboot was needed.

The interface is fairly slick and attractive, although only basic controls are available, and in general it seemed fairly responsive (although trying to run one of the on-demand speed tests failed to produce the expected browser window, leaving the product completely frozen and requiring a reboot to get things back on track). Speed scores once gathered showed reasonable times on demand but quite heavy lag times on access, with low use of RAM and fairly high use of CPU cycles when busy, as well as a fairly high impact on the runtime of our suite of tasks.

Running the detection tests proved much more demanding, with scans repeatedly crashing out or freezing, and more freezes and crashes in the on-access tests. The on-access tests were eventually completed after numerous retries, splitting the sets into smaller and smaller tasks. We found no specific samples that appeared to be causing the problems, implying that it was the sheer burden of our intensive tests that was bringing things to a crisis. On-demand jobs proved much more difficult, and several were abandoned after many days of hard work, with little to show for it.

On-access scores proved decent if unspectacular, and with the absence of any RAP scores or much else in the on-demand tests not much more can be said. The WildList and Extended WildList sets, being fairly small, were handled without difficulties, and both were covered excellently, with complete detection of both lists in both modes. In the clean sets however, a fairly large number of PDF files were flagged as potentially exploited, most of them sample documents included with Adobe products, and a handful of full false positives were also raised, including several items from major hardware brand Belkin and a component of a graphics package from Corel.

This was more than enough to deny ArcaBit VB100 certification once again, the vendor’s record now showing no passes from four attempts in the last six tests; one pass and six fails from seven entries in the last two years. With the wealth of stability problems encountered, and the lengthy if futile efforts made to get around them, the product took up several of our test systems for well over a full working week before we finally admitted defeat.

Please refer to the PDF for test data

Version 6.0.1253

Moving onto a product with a rather more reliable record in our tests, Avast has an extremely solid run of test success behind it, and with the arrival of the vendor’s latest and shiniest server version for the first time on the test bench we expected great things.

The 76MB installer runs through fairly quickly, looking slick and glossy, and needs no reboot to get most components working, although the sandboxing system is not fully active until after a restart.

Initial testing zipped through in good time, with some good speeds, medium RAM use and barely any effect on the speed of our set of tasks. However, CPU use was a little higher than average during busy periods. The on-demand detection tests blasted through with their usual rock-solid, no-prisoners attitude, and we moved onto the on-access tests expecting more of the same. In initial runs, however, we were surprised to see no sign of activity at all. Checking the settings, we observed that although the main homepage of the interface stated that protection was active, the page for the ‘Shield’ system reported its status as ‘Unknown’. Trying to restart things brought up an error claiming the shield was ‘unreachable’. Rebooting and reinstalling on a fresh system failed to resolve the issue, but a final reinstall, with a full licence key applied rather than the trial, got things moving at last. It seems there are a few bugs yet to be ironed out in this fairly new product.

Once the protection system was up and running properly, we re-ran the speed and performance measures but found the results barely changed. The on-access detection test powered through in record time, showing some superb detection rates. Similarly high scores were achieved throughout the on-demand tests, with some more excellent figures across the RAP weeks, declining only slightly into the proactive week. The Extended WildList set showed a handful of misses including several standard Windows executables, but the standard list was handled flawlessly, and with no false alarms in the clean sets Avast manages a splendid turnaround, achieving a solid pass having been on the verge of being dismissed as untestable.

The company’s strong record remains intact, with every test since 2008 entered and passed. Even with the problems encountered and the multiple reinstalls, the product’s extreme speeds over the larger test sets meant that testing took up no more than the expected 24 hours of system time.

Please refer to the PDF for test data

Version 1.1.69, Definitions version 14.0.179

As has become a standard feature of our tests in the last few years, we saw a smattering of entries this month from near-identical products based on the VirusBuster engine via the Preventon SDK. Avertive is by now one of the more familiar names on the ever-growing list. The 66MB install package ran through in just a handful of clicks, taking less than a minute to get set up with no reboot needed.

The interface hasn’t changed much since our first sight of it: pleasantly clear and simple with a basic but usable range of controls. Stability has always been a strong point with this range of solutions, and most of the tests ran through without issues, although the product interface did seem to freeze up for a while after running our performance tests. Logging remains pesky, with the defaults set to dump old data after just a few MB have accumulated, and a registry hack required to change this. However, long experience has taught us to remember to make this change before running any tests for which full results are required.

Speed measures showed some fairly average throughputs and lags, with low use of resources and minimal impact on our set of standard activities. Scores were much as expected for the underlying engine – decent and respectable throughout with a steady drop through the RAP sets. The Extended WildList set brought the only real surprise, with a fair number of items not spotted in either mode, but the standard list was expertly handled, and with no false alarms in the clean sets a VB100 is comfortably earned by Avertive.

In the last year the product has achieved three passes from four attempts; three from five entries in the last two years. Testing took just a little over the scheduled 24 hours, with only a single minor issue observed during the run.

Please refer to the PDF for test data

Version 10.0.1392

Another old-timer, AVG’s business version is a pretty familiar sight on our test bench, looking fairly similar to the home-user variants anyway. The installer measured 169MB, and despite needing only a few clicks took some time to get itself set up, although no reboot was required. The interface is a little angular and tends towards fiddliness in places, with some overlap of controls, but a good level of fine-tuning is available for those willing to dig it out.

Scanning speeds were perhaps a shade below average on first run, but blindingly quick on repeat attempts, with on-access overheads similarly improved after initial settling in. Performance tests showed medium use of memory and fairly high CPU use, but minimal slowdown in our set of tasks.

Running through the rest of the tests presented no problems, with everything running smoothly and stably throughout. Detection rates were very solid, with little missed anywhere, tapering off slightly in the later weeks of the RAP sets but still maintaining a pretty high standard even there. The core certification sets were handled impeccably, easily earning AVG another VB100 award, and even the Extended set was covered well, with only the handful of Android samples not alerted on.

AVG thus recovers from a slight stumble last time, now showing five passes and a single fail from the last six tests; ten passes, one fail and one non-entry in the last two years. With no problems noted during testing, everything was completed within the single day allotted to the product.

Scan engine V8.02.06.40, Virus definition file V7.11.13.189

As usual, long-time regular Avira submitted a full server edition for our server test, with the complete product (including updates) weighing in at under 70MB. The set-up process runs through a few stages, including the interesting step of excluding items related to a selection of major services from scanning, but still gets through in splendid time with no reboot needed to complete.

The interface uses the MMC system, but is well designed, injecting a little colour for better visibility and pulling most of the comprehensive configuration controls out to more familiar window styles. Operation was thus fairly simple and generally stable, although we did note the scanner hanging at one point while scanning the local system drive. This was easily fixed however, and did not recur.

Speeds were no more than average and no sign of any optimization was seen on repeat runs. On-access overheads were a little heavier than many this month. Resource use was low on every count though, with very little slowdown in our set of tasks.

Detection rates were as stellar as ever, with very little missed across all our sets – even the later weeks of the RAP sets were demolished with some style. The Extended list was handled cleanly on access, with just a single item not detected on demand. Closer analysis of the logs showed that this item appeared with a line simply saying ‘[filepath] Warning – the file was ignored’, where for everything else similar lines read ‘[filepath] DETECTION – [Detection ID] – Warning – the file was ignored’, hinting that some kind of log writing error was behind this one.
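The kind of log check that exposes such an anomaly can be sketched as follows. The parser relies only on the two line shapes quoted above; the actual log format of the product is not documented here, so the separator, the function name `classify` and the sample paths and detection ID used in the usage note are assumptions for illustration.

```python
# Separator and trailing text as they appear in the two quoted line shapes.
SEP = " – "
IGNORED = "Warning – the file was ignored"


def classify(line):
    """Classify a log line as 'detected', 'ignored_without_detection' or 'other'.

    Assumes a detection line carries 'DETECTION – <id> –' before the
    trailing warning, as in the quoted example. A file path that itself
    contains 'DETECTION – ' would be misclassified by this simple check.
    """
    line = line.strip()
    if not line.endswith(IGNORED):
        return "other"
    head = line[: -len(IGNORED)].rstrip()
    if "DETECTION" + SEP in head:
        return "detected"
    return "ignored_without_detection"
```

A line such as `C:\set\b.exe DETECTION – TR/Hypothetical.1 – Warning – the file was ignored` (a made-up path and ID) would be counted as a detection, while a line carrying only the path and the warning would be flagged for closer inspection.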

Meanwhile, the traditional WildList and clean sets were dealt with flawlessly, earning Avira another VB100 award for its cabinet. The vendor’s test history shows a perfect record of passes in the last two years, 12 from 12 entries. With no major issues and excellent scan times in the infected sets all tests completed comfortably within our 24-hour window.

Version 3.5.17.8

Another fully fledged server edition, BitDefender’s enterprise-ready solution came as a 182MB installer, including all required data. The installation process takes some time, although interaction is not excessive and no reboot is needed. The GUI is again based on the MMC system, makes good use of colour and provides a clear and usable interface to a very full selection of fine-tuning options.

Testing ran through fairly smoothly, although on a few occasions larger scans of infected sets failed, but log data could be retrieved thanks to a new system of writing out to disk periodically rather than storing everything in memory. Speeds were pretty reasonable, with on-access overheads perhaps a little above average, and while RAM and CPU use were fairly low our set of tasks ran a little slower than usual.

Detection rates in the infected sets were extremely impressive, close to perfect in most areas and dipping only slightly below 90% in the proactive week of the RAP sets. Both the standard and Extended WildLists were impeccably handled with nothing missed on demand, and only the handful of odd Android samples in the Extended set ignored on access. The clean set showed no false alarms, although the resulting report was perhaps a little confused; having found no infections but a hundred or so password-protected files in our set, the final results screen warned that ‘6134439 files could not be cleaned’.

A VB100 award is easily earned despite this minor oddity, and BitDefender’s record shows a full set of passes in the last six tests; ten passes, one fail and a single test skipped in the last two years. Despite a little extra interaction being required, testing powered through well within the expected time limit.

Version 10.0.190

Based on the BitDefender engine, BullGuard’s solution is a much more consumer-focused suite solution, with its 157MB installer needing only three clicks and blasting through in impressive time, with no reboot necessary to complete. The interface is a little quirky but after some exploration proves pleasantly usable, providing a decent level of controls for its multiple components.

Testing ran through fairly smoothly and very rapidly, with some decent scan times speeding up massively in the warm runs, and on-access times similarly impressive after initial checks. Performance stats showed very low RAM use and CPU use around average, but a fairly high impact on our set of jobs.

Detection tests showed the expected excellent scores though, demolishing all our sets with ease. The core certification sets were dealt with impeccably, and just a single item in the Extended WildList was missed, on access only. A VB100 award is thus easily earned by BullGuard, whose test history shows four passes from four entries in the last six tests; seven from seven tries in the last two years. With no issues noted and excellent speeds, all tests were out of the way after less than 24 hours of test machine time.

Product version 7.1.75, Scan engine 5.3.0, Virus database 14.0.183

Central Command has built up a solid record in the last couple of years, after setting up a successful partnership with VirusBuster. Central Command’s version of the product was provided as a 67MB installer plus a further 61MB of updates, and the set-up process was fairly complex with almost a dozen clicks required. It ran through fairly speedily though, requiring a reboot to complete.

Another MMC interface, this one is considerably less graceful than the others seen so far, with a clunky layout and less than easy navigation. A decent amount of control is available, but accessing and applying settings is far from fun.

Nevertheless we got through the tests without undue stress, with some decent scanning speeds and slightly high but not too intrusive overheads on access. RAM use was low and CPU use average, with impact on our set of tasks also not too heavy. Detection rates were reasonable if far from stellar, with decent coverage in most sets, dropping noticeably through the RAP sets. In the Extended WildList only the handful of non-standard file types were not covered on demand, while on access an additional half dozen executables were ignored. However, the traditional list was detected perfectly and with no false alarms in the clean sets Central Command wins another VB100 award.

This makes ten awards from the last ten tests, a perfect record since the company’s reappearance in the spring of 2009. With no issues emerging during testing and reasonable speeds, tests took just a little more than 24 hours to get through.

Version 1.1.69, Definitions version 14.0.179

Another Preventon/VirusBuster-based product, Clearsight’s 66MB installer ran through quickly with not too much interaction demanded and no reboot required. The now over-familiar GUI remains simple to operate and provides a good basic set of controls. It generally ran well, although we did note a freeze during the scan of our clean set, requiring the scan to be restarted.

Speeds were pretty decent, with overheads a little higher than expected, including a spike hinting at another temporary freeze somewhere along the way. RAM and CPU use were around average, with not too much impact on our set of tasks.

Detection rates were much as expected – reasonable but not exceptional, covering most areas pretty well. Again a fair number of Extended WildList samples were missed, but the core certification sets were properly dealt with and Clearsight earns another VB100 award.

The vendor now has four passes from five attempts in the past year. A few minor slowdowns were noted, but nothing too serious, and testing took only a little longer than the one day scheduled for the product.

Product version 5.1.14, Engine version 5.3.5, Dat file ID 201108241005

Commtouch provided its current product as a tiny 12MB installer, with definitions arriving separately as a 28MB archive bundle. Installation started with a brief silent pause, but then tripped through in just a few clicks with no need to restart. The interface remains minimalist and basic, but provides a usable level of controls and is generally simple to operate.

We noted no issues during testing, with scanning speeds a little below average and on-access overheads decidedly high. RAM use was low, but at busy times CPU use went through the roof, and the time taken to complete our set of activities more than doubled. Detection rates were distinctly unimpressive, with once again an odd hump in the middle of the RAP sets, other weeks staying remarkably stable. The Extended WildList contained a few undetected items, slightly more on access than on demand, as might be expected, but the main WildList set was well handled. In the clean sets, alongside a handful of alerts on items packed with Themida, a single item was flagged with a heuristic alert; on closer analysis, this appeared to be Welsh translations of Linux code, from a driver CD provided by major hardware manufacturer Asus. Apparently the code had sparked some emulation in the product engine, causing the heuristic rule to be tripped. This decidedly odd event was considered enough to deny Commtouch a VB100 award this month, despite a generally decent showing.

Such bad luck seems to be dogging the company of late, and it now has three passes and two fails from five entries in the last six tests; things seem to be looking up though, as the two-year picture shows four passes and four fails from eight attempts. With no stability problems, some slightly slow scanning speeds meant the full set of tests took about a day and a half to complete.

Product version 5.4.191918.1356, Virus signature database version 9868

Having put us through an epic waiting game in the last test, Comodo once again entered a brace of near-identical products, the first nominally being a plain anti-virus solution but still offering some advanced extras. The installation process was run online on the deadline day as no facility to provide offline updates was available; the 60MB installer ran fairly quickly, requiring a reboot, then drew down around 120MB of additional update data. The GUI is quite a looker: angular but elegant, with a warm grey and red colour scheme. A good range of controls is available, with some common items that were noticed as missing from the product in recent tests having been added to this latest version.

Running through the initial tasks proved simple, with scanning speeds pretty good apart from over archives (which are scanned to some depth by default), while the on-access overheads were a little above average, the exception again being archives which, quite sensibly, are not touched in this mode. RAM use was low and CPU use above average, but our set of activities ran through very quickly indeed.

Moving onto the infected sets, scans once again took enormous amounts of time to complete. Whereas in the past this presented more of an annoyance than a problem, this time we also saw scans completing but failing to produce any results, claiming the system had run out of memory (our test machines have a mere 4GB available, but this should be ample for a consumer-grade solution). On-access detection tests were similarly slow. After many days we eventually gathered a full set of data, showing the expected reasonable scores in most areas, with a steady decline through the RAP sets. The Extended WildList was handled decently too, with just a handful of items not detected, and with the clean sets handled without problems for a change, all looked good for Comodo. In the WildList, however, a single item was missed in both modes, and a VB100 award remains just out of reach this month.

This plain anti-virus version has entered three times in the last year, four times in total, and has yet to record a pass. With the extreme length of time required to get through our test sets, and the re-runs required after logging failed, the product took up one of our test machines for more than 14 full days.

Product version 5.4.191918.1356, Virus signature database version 9868

The full suite version of Comodo’s product installs from the same package as the plain anti-virus, with some extra options checked to include the firewall component and ‘Geek Buddy’ support system. This adds a few steps and a little more time to the installation process, and again a reboot and download of 120MB of updates is required. The interface is very similar, with just the extra module for the firewall controls, and again operation proved reasonably smooth and simple.

Scanning speeds and overheads were fairly similar to the plain product, scans slightly faster and overheads generally a little lighter, with RAM use and impact on our set of tasks again very low, CPU use a little above average. The infected tests took an age once more, and again we noted some issues with the logging and memory use. Detection scores were identical: respectable in most areas with some steps down through the RAP sets, and again the certification sets were mostly well handled with just a single item in the WildList set letting the side down, so no VB100 award could be granted.

This suite version has notched up one pass in the past, and now shows one pass from four attempts in the last year; one success from five entries in total.

Product version 2.003.00005, Definitions database v.10818

Coranti has made several appearances of late with its remarkable multi-engine product, but this month’s submission, we were informed, was an entirely separate product provided by the company’s Ukrainian spin-off. On the surface, however, it looked like business as usual, with a compact 12MB installer running through very quickly (offering English, Russian and Ukrainian as language options), then settling down to fetch 241MB of updates. This ran fairly quickly though, completing in less than 20 minutes, and the interface and user experience from there on was again similar to the mainline versions tested in the past. Simple, plain and unfussy, the GUI provides ample controls for the multiple engines and was generally responsive and stable throughout testing.

Speeds were surprisingly good, actually better than average in some areas and rarely much worse than the norm, while resource use and impact on our set of tasks were well within acceptable boundaries. Detection rates in the on-access tests were as excellent as might be expected, but when processing the on-demand logs the scores reported by our processing tools were so unexpected that we re-ran all the tests, only to find exactly the same results. Comparing the scores with other products this month, and looking more closely at the logging, the problem immediately became clear: somehow, despite being labelled as active in the interface, some of the engines included in the product were not operational in the on-demand mode – usually the situation in which one would expect to see the most complete effort being made. Apparently this bug was quickly pinned down and fixed, but sadly for Cora it means the scores this month will be much lower than previous records set by its sister product.

Nevertheless, detection rates remained reasonable, and the core certification sets presented no issues, comfortably earning Cora a VB100 award at its first attempt. Even with the re-runs prompted by our surprise at the scores, testing did not over-run by more than half a day, and the absence of the expected stratospheric detection rates was the only issue noted.

Version 3733.575.1669

Switzerland’s Defenx has built itself up a nice history in our tests over the last year or so, and like its partner Agnitum has generally kept the lab team happy, apart from some rather disquieting slowness in the last couple of tests. Encouraged by Agnitum’s performance this month, we hoped for a return to the solid and speedy tests of the past.

The installer was a fairly compact 96MB, and ran through in good time with not too much demanded of the user, finishing with a reboot and some initial set-up stages. As hoped, running through the tests proved painless, with reasonable speed measures and resource use showing slightly high consumption of CPU cycles and a noticeable effect on our set of tasks. Detection rates were much as expected – decent and respectable without challenging the leaders – and the core sets were managed comfortably without problems, earning Defenx another VB100 award.

This gives the vendor five passes from five attempts in the last six tests, only the Linux test not entered, and nine passes from nine entries in total. Testing ran without issues and in good time, coming in just a few hours over the allotted 24.

Version 2.1.69, Definitions version 14.0.179

Another Preventon/VirusBuster offering, again with something of a history building up over recent months, Digital Defender’s product provided no surprises. The 66MB installer proved as fast and simple as expected, with no need to reboot, and the interface was clean and clear with a good basic set of controls. Testing ran through smoothly, with speeds a little on the slow side and overheads a little above average. Low use of RAM and low impact on our set of tasks were recorded, but CPU use was fairly high at busy times.

Detection rates were unremarkable but reliable, with most areas covered to a decent degree, and the core certification sets threw up no surprises, earning Digital Defender another VB100 award. That gives the vendor four passes from five attempts in the last six tests, its recent record showing great improvements after an earlier run of bad luck. The two-year figure shows five passes and four fails from nine entries. With no issues to report and reasonable speeds, testing completed in no more than a day and a half, keeping reasonably close to our schedule.

Version 4.9.2

Blink’s main speciality is vulnerability monitoring, but with the Norman engine included for malware detection it has been a regular in our tests for some time. The submission this month was fairly large, with a 188MB installer supplemented by a 98MB update bundle, but the set-up process was fairly simple with a half-dozen clicks and a short wait while things were put in place, no reboot being required to complete. The interface is a sober grey for this server version, but little else seems different from the standard desktop client. Controls are reasonably accessible and provided to a respectable level of detail.

Testing took quite some time, with very slow speeds in the scan tests and pretty hefty slowdowns in the on-access measures, but our suite of tasks got through in decent time and RAM use was fairly normal, with fairly high CPU use at busy times. Detection rates were not bad, with decent coverage in most areas, dropping off steadily through the RAP weeks as expected. Despite a handful of items being missed in the Extended WildList, the main list was handled well, and the clean sets threw up a few suspicious alerts but no full false alarms. A VB100 award is duly earned by eEye.

The company now has four passes from five attempts in the last six tests, showing some recovery from a dark patch with four passes from nine entries in the last two years. With no problems other than the usual slow scan times – caused mainly by the in-depth emulation of the Norman Sandbox system – testing took a little more than two days to complete.

Version 5.1.0.16

Including the Ikarus scanning technology, the product formerly known as ‘A-Squared’ has become another OEM regular on our test bench. The current version came as a 120MB installer, with no further updates required, and installed in good time with no need to reboot. The interface is quirky and fairly limited as far as fine-tuning goes, but is fairly usable and generally responsive.

Scanning speeds were very slow, but overheads were not too bad, and RAM use was very low, with CPU use and impact on our suite of activities around average. On-demand detection tests ran well and showed some splendid scores, with excellent figures in the RAP sets, but on access things turned a little sour, the protection repeatedly falling over under the slightest pressure. Having nursed it through the smaller WildList sets, we wasted several days trying to coax some results out of it in the remaining sets, but eventually had to give up with time pressing.

Scores in the WildList sets would have merited an award, with fairly small numbers missed in the Extended list too, but in the clean sets a couple of false alarms were raised on items from Oracle, denying certification to Emsisoft this month. A bit of a dry spell for the vendor, with only one pass from five tries in the last six tests; two passes from eight attempts overall. With some serious difficulties with the on-access scanner, and much work trying to get it to stay on for long enough to gather results, the product sat on one of our test systems for over a week.

Version 11.0.1139.1048

The latest offering from eScan came as a 183MB installer including updates. Installation took some time to get through, with a fair number of clicks required and several fairly lengthy pauses. On completion no reboot is needed, but the product launches straight into an initial scan. The interface is an odd combination of simple and flashy, with the plain angular top half jarring slightly with the slick, glossy icons at the bottom; the configuration controls under the hood sensibly stick to a more sober approach, providing an excellent level of control in a simple and easily navigated manner.

Running through the tests proved pleasingly unproblematic, with speed measures showing some good scan times and fairly light overheads. Resource use and impact on our set of tasks were also impressively low. Detection rates, as we predicted having seen those of other products including the BitDefender engine, were superb, all sets swept aside with barely a miss, RAP scores guaranteeing a strong position in the top corner of our chart. The WildList, including the Extended list, was covered perfectly, and with no issues in the clean sets a VB100 award is earned without breaking a sweat.

Our test history shows a decent record for eScan with five passes from the last six tests; nine passes and three fails in the last two years. With no problems and good speeds, all tests were completed within the allotted 24-hour period.

Version 4.2.71.2, Virus signature database 6406

Still maintaining an epic streak of passes, ESET provided its latest product as a compact 49MB package, which installed with a simple process in good time. The interface remains attractive and pleasant to use, with good clarity in the main areas and a great level of fine-tuning under the covers. Operation was smooth and stable throughout testing, with no surprises or issues to report.

Speed measures showed decent scan times and reasonable overheads on access, with fairly low use of resources and fairly low impact on our suite of tasks. Detection rates were superb, with very solid coverage of all the sets, the reactive parts of the RAP sets showing only the slightest decline with a slightly more pronounced drop into the proactive set, but remaining impressive even here. The Extended WildList was handled impeccably, as were the core certification sets, and ESET easily adds another VB100 pass to its monster collection – the company can boast entering and passing every test since mid-2003. With no problems and decent speeds, testing fitted easily into the allotted 24 hours.

Version 2.5.0.12

The first newcomer this month, the ESTsoft name may be familiar to some thanks to the unfortunate association of one of the company’s other products with a mass hack attack in Korea a few months ago. The company’s security product should be much sounder though, being based on the BitDefender engine which has already proved itself more than capable of handling our test sets this month.

The product was sent in as a 153MB installer, which went through the usual set-up stages at a good rate, finishing with no need to reboot. The interface looks clean and simple; the ‘AL’ in the name is apparently Korean for ‘egg’, and a cartoony egg-shaped character adorns splash screens to lend the product a friendlier touch. Configuration areas are a bit plainer, providing a splendid degree of control, but the lab team felt a rather vague sense of unease navigating things, probably due to the occasional infelicity of translation.

Running the tests proved smooth and problem-free, the product zipping through our sample sets with good speeds and low overheads. Resource consumption was comfortably below average and impact on our set of tasks not too intrusive. Detection rates were excellent as predicted, the scanner effortlessly blasting through the sets with barely a miss. With the certification requirements met without difficulty, ESTsoft earns its first VB100 award on its first attempt. No problems emerged, and testing completed well within the allotted time.

Version 4.1.3.143, AntiVirus engine 4.3.374, Virus signatures version 11.77

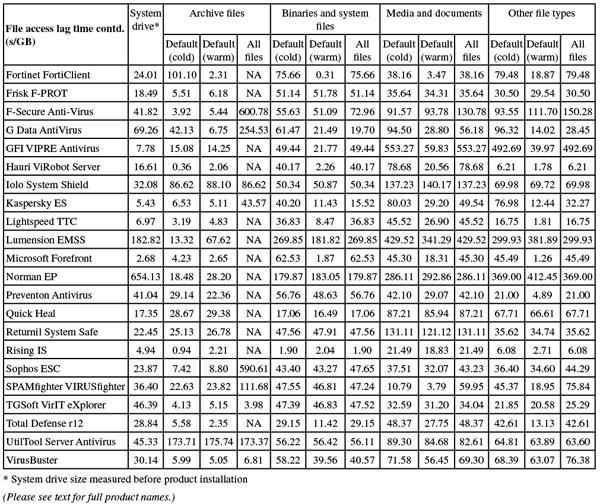

A rather more familiar name, Fortinet has shown some impressive improvements of late as FortiClient turns up its default heuristics to great effect. The product remains compact at just 12MB, with definitions somewhat larger at 129MB. The installation process is rapid and straightforward, not taking long but needing a reboot to finish things off. The interface is plain and businesslike, providing all the controls required and with a simple and responsive user experience.

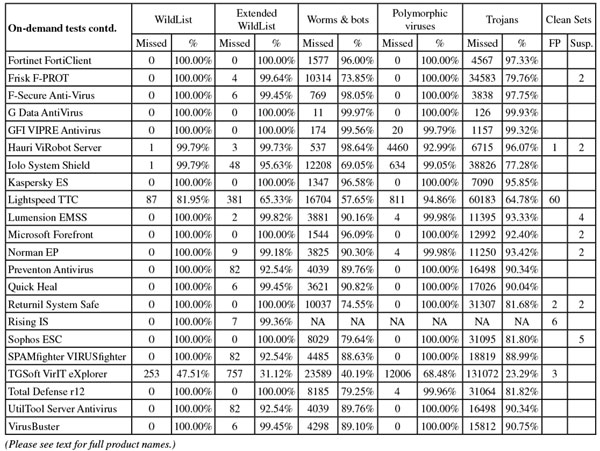

Testing was a pleasure, with no issues to report. Scanning speeds were not too fast, but on-access measures showed some good optimization after initial checking. Memory use was fairly low, CPU use fairly high but not outrageous, while impact on our set of tasks was perhaps a fraction above average. Detection rates were very solid though, with impressive scores everywhere but a fair-sized drop noticeable going into the final week of the RAP sets. The Extended WildList was fully covered, as was the more traditional list, and with no issues in the clean set a VB100 award is comfortably earned by Fortinet.

The long view shows four passes and a single fail from five attempts in the last six tests, the annual Linux test having been skipped, and the same pattern repeated in the previous year, adding up to eight passes from ten entries in the last two years. No problems were noted and testing ran through in good time, well within our scheduled slot.

Please refer to the PDF for test data

Antivirus version number 6.0.9.5, Antivirus scanning engine version number 4.6.2

Frisk’s product seems to have remained much the same for many years now, the 31MB installer and accompanying 27MB update bundle being applied in a very familiar fashion, with only a few steps and a few seconds waiting before a reboot is demanded. On restart, the product seems rather slow to come online, refusing to open its GUI for almost a minute. Once it appears, the GUI is icily minimalist, with very few controls, and some of those that are provided are distinctly odd, but it remains pretty simple to operate and responded well during most of our tests.

Speed measures showed some reasonable rates, with on-access overheads a little above average; RAM use was fairly low, CPU use medium, and impact on our set of tasks barely noticeable.

On running the detection tests, scans died a few times (as we have come to expect) with the product’s own error-reporting system sometimes allowing jobs to continue, while other times nothing short of a reboot was able to get things moving again. Detection rates were, as expected, fairly mediocre but reliable across the sets, with a handful of more obscure file types missed in the Extended WildList but no problems in the core certification sets, and Frisk earns another VB100 award without fuss.

The vendor’s recent test history is good, with five of the last six tests passed; the longer term view is a little shakier, with eight passes and four fails in the last two years. The only issues observed were scan crashes when running over large infected sets (unlikely to affect real-world users). With some fairly slow speeds processing polymorphic samples, testing took close to two days to complete, but didn’t knock us too far off schedule.

Version 9.00 build 333, Anti-Virus 9.20 build 16040

Another cold climate vendor, F-Secure sticks to the icy white theme, the enterprise solution’s 45MB installer (supplemented by 151MB of updates) running through quite a few stages but needing no reboot to complete. The interface is cold and stark, running in a browser and suffering the usual responsiveness and stability issues associated with such an approach. Configuration is fairly limited and at times inaccessible. On several occasions scans would not start, the interface getting itself tied into knots and needing a reboot to resolve matters.

We eventually got things moving along, with speed tests showing some reasonable scan times but fairly heavy overheads on access. RAM use was not high though, and CPU use not exceptional either, with a very low time for our suite of activities. Moving on to the detection tests, things ran through fine on access, but on-demand scans repeatedly dropped out without warning or explanation. Some in-depth diagnostics showed that specific files seemed to be tripping the scanner up. Trying to filter these files out over numerous scans proved too much work for our hard-pressed team, and in some areas we resorted to using the on-access scanner results only.
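The filtering chore described above, narrowing down which files make a scan drop out, can be sketched as a repeated halving of the sample set. This is only an illustration and not the lab's actual tooling: `scan_ok` is a hypothetical callback standing in for "run one on-demand scan over this batch and report whether it finished", and the sketch assumes that crashes are repeatable and triggered by individual files.

```python
from typing import Callable, List, Sequence, Tuple


def isolate_crashers(
    files: Sequence[str],
    scan_ok: Callable[[Sequence[str]], bool],
) -> Tuple[List[str], List[str]]:
    """Split `files` into (scannable, crashers) by repeated halving.

    `scan_ok(batch)` is a hypothetical hook: it should run a single scan
    over `batch` and return True only if the scan ran to completion.
    """
    if not files:
        return [], []
    if scan_ok(files):
        # The whole batch scanned cleanly, so keep every file in it.
        return list(files), []
    if len(files) == 1:
        # A single file that still kills the scan is a crasher.
        return [], list(files)
    mid = len(files) // 2
    good_left, bad_left = isolate_crashers(files[:mid], scan_ok)
    good_right, bad_right = isolate_crashers(files[mid:], scan_ok)
    return good_left + good_right, bad_left + bad_right
```

Each failing batch costs another scan, which gives some idea of why the approach eats lab time when sets are large and scans are slow.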

These showed some splendid scores, as expected from the results of other products that include the BitDefender technology, with most sets demolished and the Extended WildList only missing those odd items in non-executable formats. The main WildList and clean sets were handled impeccably, and a VB100 award is earned by F-Secure.

The last six tests show five passes from five entries; nine passes from nine attempts in the last two years, a solid record. With much work having gone into re-running crashed scans, the product took up at least four full days of test machine time this month. However, there were no issues that would be likely to emerge in everyday, real-world use.

Program version 11.0.1.44, Virus signatures: Engine A: AVA 22.1766, Engine B: AVL 22.315

With both engines included in this product already having demonstrated strong performances this month, and the product’s multi-engine rivals either absent or hobbled by bugs, hopes were high for another stellar showing from G Data. The enterprise product came in two parts, with an administration system provided as a 684MB installer and a client subsystem pushing 200MB. Setting up the admin component took quite some time and much interaction, with various dependencies resolved along the way, but the client part ran much more rapidly and with limited interaction. A reboot was required to get everything active.

With options available to offload responsibility for running scans and tweaking settings to the client, testing itself was fairly straightforward. The product’s usual rapid warm scanning speeds kicked in during the speed tests, shooting off the top of the scale in the on-demand graph. On-access overheads as usual were reasonably light with the default settings, noticeably heavier with scan settings turned up high. RAM and CPU use were a touch above average, but our set of tasks was completed at a good speed.

Detection rates were as awesome as expected. The RAP sets were destroyed, even the proactive week pushing close to 90%, and the core certification sets were brushed aside in short order. G Data comfortably earns another VB100 award, the vendor’s history showing four passes from five entries in the last six tests; eight from ten in the last two years. With no issues, solid dependability and decent speeds, all tests completed on schedule in less than a day.

VIPRE software version 4.0.4210, VIPRE engine version 3.9.2500.2, Definitions version 10257

Fully settling after the transition from Sunbelt to GFI, VIPRE has become a regular in our tests and seems to crop up ever more regularly as an OEM engine. The current version is a slimline 13MB main installer, with 72MB of updates also required, and the set-up process runs at average speed with a standard set of steps to follow. The interface is clear and bright without overdoing the styling, providing a pretty basic set of controls – which are a little less than clear in places, but generally not too difficult to operate.

Scanning speeds were rather slow and on-access overheads a little on the high side, but use of RAM and impact on our set of tasks were both fairly low, with CPU use at busy times a little above average. Running the detection tests was as tiresome as ever, with logs stored in memory until the completion of scans which frequently died with a message stating that the scan ‘failed’ for no clear reason, leaving no usable data behind. On-access testing was also a little fiddly, with the product’s use of delayed checking meaning that our opener tool was regularly allowed access to a file for a few moments before the detection kicked in. This meant having to cross-reference the product’s rather unfriendly logs with our own data, and in the case of the on-demand tests re-running jobs many times with ever smaller subsets of our full set.
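The cross-referencing step can be pictured as a simple set merge: a sample counts as detected on access if either our opener was denied access or the product's own log later names the file. The sketch below is a hedged illustration under that assumption; the function names, the path normalisation and the idea that both sources can be reduced to plain lists of paths are ours, not a description of the real log format or of the lab's scripts.

```python
from typing import Iterable, List, Tuple


def normalise(path: str) -> str:
    """Make Windows-style paths comparable (assumed convention)."""
    return path.replace("\\", "/").lower()


def merge_on_access_results(
    samples: Iterable[str],
    opener_blocked: Iterable[str],
    product_log_hits: Iterable[str],
) -> Tuple[List[str], List[str]]:
    """Return (detected, missed) for the given sample set.

    `opener_blocked` lists files the opener tool could not read;
    `product_log_hits` lists files named in the product's detection log,
    which catches detections that fired only after a delay.
    """
    wanted = {normalise(p) for p in samples}
    seen = {normalise(p) for p in opener_blocked}
    seen |= {normalise(p) for p in product_log_hits}
    # Log entries for files outside the sample set are ignored.
    detected = wanted & seen
    missed = wanted - seen
    return sorted(detected), sorted(missed)
```

Normalising paths before comparing matters here because the two sources are unlikely to agree on case or separator style.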

Eventually, however, results were gathered, and they showed some pretty impressive scores, dropping away fairly steeply in the proactive week of the RAP sets but remaining decent even there. The Extended WildList was covered flawlessly on demand but showed a few misses on access (possibly items considered merely grey as well as the handful of Android samples). The main WildList was handled well though, and with no false positives to report, a VB100 award is duly granted to GFI after great labours on our part.

The product’s test history shows five passes from five attempts in the last six tests; seven from eight attempts in the last two years. With the slow scanning and multiple re-runs required, not to mention the hard slog of turning logs into usable data, more than two full weeks of test lab time were taken up – many times the fair share we hoped each product would need.

Version 6.0.0, Engine version 2011-08-24.00(8965391)

This is another product including the BitDefender engine, but one which routinely seems to have difficulties where other products using the same technology do not. Hauri’s ViRobot is a sporadic entrant in our tests and last achieved a pass way back in 2003. The current version was provided as a 243MB installer including all updates, and ran through a number of stages, including the offer of a pre-install scan and some configuration options, taking quite some time to finish but needing no reboot. The interface is quite attractive – elegant and simple on the main screens but providing a lot of good controls under the covers. There is also some useful information provided, such as system resource use monitors presented in a pleasantly clear manner.

Running tests went pretty smoothly at first, with scanning speeds good to start with and super fast on repeat runs, on-access times likewise starting off pretty decent and becoming almost imperceptible once warmed up. Resource use was very low and our suite of activities ran through in splendid time. On-demand detection tests ran well, showing the excellent scores expected of the underlying engine in most areas, but the on-access tests proved a little more tricky, the protection system buckling under heavy pressure and apparently simply shutting down silently halfway through jobs. After several attempts we got a full set of results, tallying closely with the on-demand scores, with very impressive numbers in most areas.

Eagle-eyed readers will have noted the words ‘in most areas’ above, and the solid detection sadly did not extend quite broadly enough. Three samples were missed in the Extended WildList, all of them standard Windows malware, while a single item went undetected in the main WildList set. With a couple of false positives in the clean set too, Hauri does not quite reach the required standard for VB100 certification.

The vendor has had no luck from four attempts in the last year, and no entries before that for quite some time, but things seem to be gradually improving and we would expect to see a pass sometime soon, assuming we continue to see regular participation. The only problem encountered was the collapse of the real-time protection under heavy load – perhaps an unlikely situation in the real world, but it set our testing back somewhat and with the many re-runs required the product needed almost a week of lab time to get everything completed.

Version 4.2.4

Another product insisting on installation and updating online on the deadline day, Iolo provided a tiny 450KB download tool which proceeded to fetch and install the main product. The installer brought down measured 48MB, and an option was provided to have it saved locally in case it was needed again in future. After the standard install steps, the set-up process was pretty fast, completing in less than a minute, then a reboot was requested before updates ran, again not taking too long to complete.

The interface has a fairly standard design and looks slick and professional, providing a surprisingly high degree of control under the hood for such a clearly consumer-focused solution – although there was still much absent which one would hope to see in a business product. The absence of a context-menu scan option, almost universal these days in desktop offerings, was rather a surprise.

Operation was fairly smooth and simple though, and speed tests ran through without issues, showing some reasonable scan times but fairly high overheads on access. RAM use was quite low but CPU activity was very high and our set of tasks seemed to take forever to complete. Moving on to the detection tests, we once again could find no option to simply log detections. Instead we opted to remove everything detected, but this seemed less than reliable. Logs are stored in an extremely gnarly binary format, and cannot be exported to file from the product interface, so we contacted the company asking for information on how to decrypt them – the fourth consecutive test where we have made such a plea for information. At least this time we got some response, claiming someone was looking into it, but when several weeks later we still had received no further help decrypting, we had to resort to some rough manual ripping apart of the data. Combining this with information on which files had been left on the system after several runs gave us some usable data, which seemed close enough to the on-access scores and to those of other products based on the same Frisk engine that we assumed it to be reasonably accurate.

Detection rates were generally fairly mediocre, with respectable but not impressive rates in most areas; RAP scores place the product towards the lower end of the main cohort on our scatter graph. The Extended WildList showed a handful of misses, and in the main list a single item seemed to go undetected in the on-demand mode only. Surprised by this, and assuming there had been some error in our brute-force log parsing, we repeatedly rechecked the sample, but found each time that no alert was produced and the file was left in place. Trying to copy the file elsewhere meant the on-access component kicked in and immediately alerted on it and removed it. Thus, despite an otherwise clear run through the clean sets, no VB100 award can be granted to Iolo this month.

The product’s test history shows two passes from four attempts in the last six tests, with one additional entry in the last two years not adding to the tally of passes. Although testing ran in reasonable time, the apparent inconsistency of the on-demand scanner’s response to detections and the bizarre nature of the product’s logs, coupled with the company’s apparent inability to help out with this, meant that more than a week was taken up by this product.

Version 8.1.0.524

Back to a company with a long and pretty glorious history in our comparatives, but one which has also had some trouble describing the operation of its logging system in recent tests, Kaspersky provided its latest, shiniest enterprise solution this month, a late beta of the new corporate desktop product. The installer was a fair size at 127MB, with updates provided as a mirror of a complete update system supporting a range of products and thus also fairly large. The set-up process was a little slower than some this month, but still ran through in reasonable time with not too much demanded of the user, and needed no reboot to complete.

The interface is a considerable achievement: simple, elegant and highly efficient, providing the superb depth of fine-tuning we expect from Kaspersky without sacrificing usability. Some areas seem familiar from related products, while others look all new but are immediately clear and easy to operate. Testing thus moved along nicely.

Speeds were a little sluggish in initial scans, but the warm runs powered through much more rapidly thanks to some smart optimization. On-access overheads were distinctly on the light side. Resource use was low and our set of standard activities ran through very quickly indeed. Detection tests were mostly completed with ease, although a few parts of the RAP sets did seem to cause some rather lengthy hangs, and we re-ran them just to be safe, seeing no repeat of the slowdowns. Scores were very solid across the sets, with a very good showing in the RAP sets, tailing off only slightly into the latter weeks, while the Extended WildList was perfectly handled on demand, only the Android items being ignored by the real-time component. The core certification sets presented no difficulties at all, and a VB100 award is comfortably earned by Kaspersky.

The company’s history is a little diverse given its wide product range, but counting this fully fledged suite as part of the Internet Security continuum, we see four passes from five attempts in the last six tests; nine from ten in the last two years. With good reliability and splendid speeds, even with a few re-runs to double-check things, all tests were completed within the allotted 24 hours.

Version 8.01.04

Lightspeed Systems is another newcomer to our comparatives; we have been in contact with the company for some time but have only now managed to get the product included in a test. The company’s main market is apparently in the US education system; its product is entirely the company’s own work with no OEM technology included. The installer submitted was a slim 13MB package, which we installed and updated online on the deadline day. The set-up process was fast and simple, with only a couple of clicks required and all done in under half a minute with no request for a reboot.

With installation complete, we tried opening the interface to no avail. A reboot sorted this out, however, and we got our first look. The layout looked decent, clear and simple to use, and provided a reasonable degree of control, but updating seemed to take some time, the progress bar halting on 10% and staying there for over an hour. Another reboot and retry of the update did the same, but leaving it going finally produced results and we were able to continue.

Using the product was generally no problem, and we produced speed and performance stats showing fairly slow on-demand scanning speeds but very light overheads on access and low resource use, with little effect on our suite of tasks. Closer examination showed that this was probably mainly a result of the real-time protection not being applied on read by default, and our detection tests were thus run by copying files around the system rather than using our usual opener tool. In the larger tests, stability under pressure was rather suspect, with scans taking a long time to return control after completion. This proved to be the case even when scanning clean samples only, with the scan of our clean sets leaving the system completely unresponsive for over four hours. It appears that the product records all unknown files as well as those flagged as detected – presumably as part of some sort of whitelisting system – so perhaps these odd slowdowns are due to trying to feed this data back to some remote server, inaccessible from our lab environment.
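For readers curious how a copy-based on-access check differs from simply opening files, a rough sketch follows. It assumes a real-time scanner that intervenes on write rather than on read, and treats a sample as blocked if the copy fails or the copied file is no longer present afterwards. The function and its parameters are hypothetical and are not the lab's tool; a real harness would also wait a little before checking, and would tell a scanner block apart from other I/O errors.

```python
import os
import shutil
from typing import Iterable, List


def probe_by_copy(samples: Iterable[str], scratch_dir: str) -> List[str]:
    """Copy each sample into `scratch_dir`; return those that were blocked.

    A sample is counted as blocked when the copy raises an OSError or
    when the destination file has vanished straight after the copy.
    """
    os.makedirs(scratch_dir, exist_ok=True)
    blocked = []
    for src in samples:
        dst = os.path.join(scratch_dir, os.path.basename(src))
        try:
            shutil.copyfile(src, dst)
        except OSError:
            # Simplification: any I/O failure is treated as a block.
            blocked.append(src)
            continue
        if not os.path.exists(dst):
            # The copy succeeded but the file was removed afterwards.
            blocked.append(src)
    return blocked
```

On a machine with no scanner running, every readable sample copies cleanly and the returned list is empty, which makes the sketch easy to sanity-check.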

Results were eventually obtained, and were a little disappointing, with a fairly large number of misses in most areas. RAP scores were poor, but fairly even across the weeks at least, and in the WildList set quite a number of items were undetected. In the clean sets there were also quite a few false alarms, including files from major brands such as Adobe and Acer as well as components of popular software like Alcohol, Azureus/Vuze, the Steam gaming system, and EASEUS Partition Master. All of this means, of course, that Lightspeed will need to do quite a bit more work to meet our certification requirements, although it seems to have made a fairly decent start. With the slow scans and long recovery times, testing took around a week to complete.

Scan engine version 6.7.10, Antivirus definition files 6.7

Another product from the whitelisting field, Lumension has a previous pass under its belt thanks to the Norman engine bundled with its heavyweight corporate solution. However, it made no friends in its last appearance with a distinctly difficult interface and some odd approaches to implementation. Set-up from the 198MB bundle included the provision of several dependencies, including Microsoft’s Silverlight, .NET framework and an upgrade to the installer system, which took around 15 minutes, requiring a reboot before we could continue with the installation proper. On attempting this, we discovered yet more requirements, including the activation of IIS and ASP, which had to be done via the server management system. Moving on again, we ran through a more standard install process which, after gathering some information, took around 20 minutes to complete.

The product interface is web-based and suffers the expected flakiness, sluggish response, frequent freezes and session timeouts we have come to associate with such control systems. An often bewildering layout, complex procedures for performing simple tasks, and a scanning system which lacks a simple ‘scan’ option were all additional frustrations that led to considerable wailing and gnashing of teeth in the test lab before we were done with this one. On-demand speed tests were performed using the only option available: an entire system scan with the option to exclude certain areas. To get just the folders we required scanned, we had to add a lengthy list of such exclusions using a fiddly XML format, but we soon got the hang of it and seemed to be moving along. We then discovered a bug in the exclusion system, which meant that once an item had been added to the exclusion list it could not be removed; although the interface reported successful removal and showed a shorter list, at the end of a scan it had reappeared in the list hidden away in the scan log. This meant multiple retries, taking huge amounts of time as the scanner seemed to check each file on the entire system in turn, only to ignore most as the partition they were on was excluded.

We did eventually persuade it to scan through our speed sets alone, but the additional overheads meant the times were extremely slow, barely even visible on our graph. It seems that the scanner design overheads were not the sole cause of this slowness though, as the on-access lag times were extremely heavy too, with high use of memory and processor cycles throughout and a noticeable impact on our suite of activities.

Detection tests were similarly troublesome, with several attempts once again hampered by the misfiring exclusion system, and those which did get started regularly stopped silently after only a fraction of the job, with no reason given for the abort. Having gone away to the VB conference leaving it running over the full sets once more, we returned to find data for only the first few days of the first set, and yet more retries were needed. With time pressing, the RAP data was harvested using the on-access component, which seemed to be more reliable but may not have provided the full detection capabilities of the barely usable on-demand scanner.

In the end, detection rates were respectable, with a good showing in the main sets and slightly lower scores through the RAP sets, although some of the scores here were actually slightly higher than those recorded by other entries using the same engine. In the Extended WildList just a couple of Android items in archive formats were ignored, and the standard list was covered well. With no false alarms in the clean sets, Lumension just about scrapes a VB100 award, having made our lives extremely difficult once again. From two entries the company now has two passes, and thanks to the design of the product as much as the bugs in the scan set-up system and the scanner itself, testing took up one of our test machines for more than three full weeks.

Product version 2.1.1116.0, Signature version 1.111.469.0

Back to a company with a slightly more standard approach to GUI design, Microsoft’s corporate product was submitted as a svelte 20MB installer with just 64MB of additional updates, and the set-up process was simple and speedy with no reboot required. The interface is pretty familiar to us by now, looking clean and sharp, providing only basic controls and occasionally confusing with its use of language but proving generally fairly usable.

Scanning speeds were pretty reasonable, and overheads light, with low RAM use and impact on our set of activities, and busy CPU use around average. Detection tests ran through smoothly if a little more slowly than the ideal, showing some solid scores across the board with a very even downward slope through the RAP sets. The Extended WildList set saw everything blocked on access, while processing on-demand logs using our standard method showed two items not detected. Closer analysis showed that these were in fact both alerted on, one as a ‘Hack Tool’ and the other labelled ‘Remote Access’. These ‘grey’ type detections are not counted under our current scheme but are likely to be included once the new set becomes part of the core requirements – although we would hope that such borderline items will be excluded from the list at an earlier stage in future. The core certification sets were well handled, and a VB100 award is comfortably earned.

Forefront’s test history is solid if sporadic, the company alternating entries with its consumer offering. From three entries in the last six tests, three passes have been attained; five passes from five tries in the last two years. Testing ran without incident but detection tests were a little slow, all jobs completing in around two full testing days.

Program Manager version 8.10, Scan engine version 6.07.10, Antivirus version 8.10

Yet another of our old-time regulars, whose engine has already cropped up a few times this month, Norman’s current product came as a 131MB installer including all updates, and set up in half a dozen standard steps, running at average speed and needing no reboot. The interface remains rather clumsy and less than reliable, prone to regular freak-outs, freezes and general flakiness – including occasionally going all blurry, much to our consternation. The layout is often confusing, and only manages to provide a limited selection of configuration controls even after considerable searching. We also noted that it regularly ignored our instructions, deleting and disinfecting items despite having been explicitly told to log only.

Nevertheless, tests proceeded reasonably well, with the expected crawling scan speeds and hefty on-access overhead. RAM and CPU use were pretty high but our set of tasks did not take too much longer than normal to get through. Detection tests were completed without too much effort, showing some pretty decent scores in most areas, with the Extended WildList dealt with fairly well on access, only a couple of non-standard file types ignored, while on demand a handful of executables were omitted. Looking deeper, we saw that the missed files were all consecutive, hinting that some chunk of the files had been passed over, or a problem with logging had occurred. The main WildList and clean sets were handled properly, earning Norman a VB100 award.
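The check for consecutive misses can be sketched in a few lines of Python. This is only an illustration and not our actual log-processing tooling: the function name `missed_runs`, the sample file names and the idea of comparing a scan-order list against a set of detected names are all assumptions made for the example. A single long run of misses points to a skipped chunk or a logging gap rather than genuine detection failures scattered through the set.

```python
from itertools import groupby


def missed_runs(scan_order, detected):
    """Return runs of consecutive misses as (start_index, length) tuples.

    scan_order: sample names in the order the scanner was given them
                (hypothetical input, for illustration only).
    detected:   set of sample names that appear as detections in the log.
    """
    runs = []
    index = 0
    # groupby collapses neighbouring samples that share the same missed/detected state
    for is_missed, group in groupby(scan_order, key=lambda name: name not in detected):
        length = len(list(group))
        if is_missed:
            runs.append((index, length))
        index += length
    return runs


# Hypothetical data: ten samples, three neighbouring ones absent from the log
scan_order = [f"sample_{i:03d}.exe" for i in range(10)]
detected = set(scan_order) - {"sample_004.exe", "sample_005.exe", "sample_006.exe"}
runs = missed_runs(scan_order, detected)
```

With the made-up data above, all three misses fall into one run starting at position 4, which is the pattern that would suggest a passed-over block rather than three unrelated misses.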

The vendor’s test history shows five passes from the last six tests – a great improvement on the previous year, as we see only six passes from 11 attempts over the last two years. Despite the considerable flakiness of the interface, the actual protection seemed to be pretty stable, but slow scanning speeds added to testing time, and all jobs completed after about three days.

Version 5.0.69, Definitions version 14.0.179

The source of a number of entries this month, Preventon promised few surprises. Setting up the product from its 68MB installer – which ran through in good time with no reboot – and using the interface, which is simple and clear with a good basic level of controls, proved something of a breeze. Logging remains the main headache with this product, with registry tweaks required to prevent it dumping records after a few MB.

Speed tests showed slowish scan times and lightish overheads, with resource use perhaps a shade above average and impact on our set of tasks likewise slightly on the high side. Detection tests ran through without problems, showing some reasonable scores across the board, a fair few misses in the Extended WildList but no problems in the main list or clean sets, earning the product another VB100 award without fuss.

The vendor’s test history shows four passes from five entries in the last six tests; six from eight attempts in the last two years. Testing was all plain sailing, taking just a little more than the 24 hours allotted.

Version 13.00 (6.0.0.1)

Quick Heal’s ‘Server Edition’ came as a 228MB bundle, which ran through in quite good time for the size, with no reboot needed. The interface is pretty much identical to consumer versions we’ve seen in recent tests: clean and modern with a few slight quirks of layout, but fairly good usability and clarity.

Testing ran through very nicely, with scanning speeds not too slow, notably higher over executables than elsewhere, and overheads a little high, but lighter on executables. Resource use was unremarkable with most measures around average. Detection rates were pretty strong on demand, noticeably lower on access, but RAP scores were rather unpredictable, dipping after a decent start but then climbing upward again into the latter weeks. The Extended WildList showed a handful of ignored items, but the core certification requirements were met without difficulty and a VB100 award is duly earned.

The company’s history in our tests goes back almost a decade, and has been pretty strong of late, with six passes in the last six tests; 11 passes and a single fail in the last two years. Testing ran smoothly this month with no major errors, all completing on schedule in under a day.

Version 3.2.12918.5857-REL14

Another OEM product, this one uses the Frisk engine by way of Commtouch alongside its own speciality, a virtualization and reversion system to undo unwanted changes to a system before they can be permanently committed. The installer measured 38MB, with updates in a separate 28MB bundle. The set-up process was speedy with little attention required, although a reboot was demanded at the end.

The interface looks pretty decent and provides simple access to a basic range of controls (once again context menu scanning is notable by its absence), and it ran pretty stably throughout testing. Scanning speeds were slow and overheads pretty high, with low RAM use but high use of CPU and a fairly heavy effect on the runtime of our standard activities, as noted with other products based on the same technology. Detection rates were unremarkable, apart from a very steady base rate across the RAP sets, and the traditional WildList set was well handled. In the clean sets, as feared, the same file from a driver CD caused an alert, and a second clean file was also wrongly flagged as infected. This was enough to deny Returnil certification this month despite an otherwise decent showing.

The product now has three passes from five tries in the last six tests; four passes and three fails overall. Testing was a little slow but there were no serious issues, all completing in around a day and a half of test lab time.

Version No.: 23.00.41.88

Rising’s latest product gave us some problems last time around but just about scraped a pass, and we hoped to see some improvements in stability and general sanity this month. The installer measured 89MB including all updates, and ran through fairly speedily although with a fair few clicks required. The interface is distinctly unusual, both in layout and general look and feel, with a sparkly colour scheme and a home page dominated by performance graphs. Navigation can be baffling at times, mainly thanks to oddities of translation, but a reasonable level of configuration appears to be available for those able to fathom its mysteries.

Initial testing proceeded well enough, with slow scanning speeds apart from the warm scan of archives, light overheads and low RAM use, and both CPU use and impact on our set of tasks around average. Detection tests were rather painful though, with huge issues once again in the logging and reporting system. Scans repeatedly ran along merrily racking up thousands of detections, only to come to an end with a message stating ‘No virus was founded’ [sic] and ‘Your computer security is safe’. Picking as much as we could out of logs, and re-running scans carefully multiple times, we still found no reliable data, and once again had to give up under time pressure. Thus no RAP scores or detection rates for several of the main sets were available, but at least we managed to get through the WildList sets, which showed solid coverage, including complete detection of the Extended list on demand. In the clean set, however, a number of false alarms were noted, including an item from Sun labelled as a dropper trojan, and as a result no VB100 award can be granted to Rising this month.

The company’s test history shows sporadic entries in our comparatives, with one pass and two fails in the last six tests; three of each in the last two years. With all the reporting issues and multiple retries needed, testing ran for over two weeks before we gave it up as a lost cause.

Sophos Anti-Virus 9.7.4, Detection engine 3.22.0, Detection data 4.68G

The current version of Sophos’s standard Windows desktop product comes as an 89MB installer, with additional incremental updates measuring just a few hundred KB. The install process has perhaps a few more stages than average but is done with in good time, needing no reboot to complete. The interface is stark and functional but fairly simple to operate, providing the full range of controls one would expect from an enterprise-grade solution, including extreme fine-tuning in a super-advanced area. Testing ran through without any nasty surprises.

Scanning speeds were a fraction above average, but so were on-access overheads, with resource use and impact on our set of tasks unexceptional. Detection tests showed some respectable if not stellar scores, with flawless coverage of the Extended WildList. The traditional list and clean sets were also handled impeccably, comfortably earning Sophos a VB100 award.