2011-10-01

Abstract

There has already been extensive research into the plethora of tricks used by contemporary malware and executable protectors with the purpose of breaking debuggers and emulators. Unfortunately malware authors are aware of such research efforts and the countermeasures introduced by engine developers. They are also pretty much aware of the capabilities of AV emulators, and are ready and prepared to deploy tricks to overcome them. Gabor Szappanos looks at a small cross-section of the threat landscape.

Copyright © 2011 Virus Bulletin

If you are a regular reader of Virus Bulletin, you will be aware of the excellent and extensive research undertaken and written about by Peter Ferrie on the plethora of tricks used by contemporary malware and executable protectors with the purpose of breaking debuggers and emulators [1], [2], [3], [4], [5], [6], [7], [8], [9], [10], [11], [12], [13], [14], [15]. Now, if you are also a developer of an anti-virus engine, you ought to have done your duty, learned all these tricks and made sure your emulator won’t fall for them. You might then expect that your engine would be able to go through the external layers of protection and get to the heart of the malicious code without any difficulty. Unfortunately, nothing could be further from the truth – the real fun is just beginning.

The authors of the high-profile malware families are also aware of our industry’s research efforts and the countermeasures introduced by our engine developers. They are also pretty much aware of the capabilities of AV emulators, and are ready and prepared to deploy tricks to overcome them.

In this article I will analyse only a minuscule cross-section of the threat landscape, both in time and in terms of malware family representation. Only three malware families will be described, and only a few months will be covered for each. This is hardly a complete picture, but it will give an idea of how much pressure the bad guys put on AV developers, and the level of the arms race that engine developers have to face on the battlefield. I am certain that even within this limited scope, several different variants will have gone unnoticed by us (as we mainly observe those that our scanner didn’t detect), so the difficulties outlined in this article should be considered to be significantly underestimated. Even with all these limitations, the length of this article well exceeds that of a usual Virus Bulletin article – which gives an indication of the full weight of the problems we are facing every day.

All three malware families are active today. When selecting the particular observation periods I picked a time range when we could pretty confidently identify and follow the regular development within the family.

Systematic attempts to fool emulators are nothing new. They date back at least five years, to the mass appearance of Tibs variants. The earlier ones only used FPU instructions at the entry point. The FPU infrastructure and instruction set were omitted by AV emulators in order to save development effort and memory space, so successful emulation was rendered impossible within a few instructions.

Several variants used fake API calls with invalid arguments just to check that the appropriate error condition was returned. These API calls included all sorts of non-core system dlls from gdi32 to wsock32, such as: AbortDoc, BeginDeferWindowPos, CIsinh, closesocket, CombineRgn, DdeUnaccessData, DeleteUrlCacheContainer, DragQueryFile, EndDialog, EndPath, ExtractAssociatedIcon, GetTapePosition, GetTimeFormat, InternetErrorDlg, InvertRgn, PropertySheet, RealizePalette, ShFileOperation, StartPage and WantArrows. These variants started appearing at the end of 2006 and we have seen the occasional sample as late as 2008.

Later on, numerous variants of Swizzor (mostly active in 2008–2009) became proficient at squeezing so many fake loops into the top layers that going through them took tens of millions of CPU instructions, easily exhausting the emulators’ limits. For performance reasons, emulators in scan engines are not allowed to run indefinitely (that would slow down the system, which users generally don’t tolerate well).
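The effect of such fake loops can be illustrated with a toy model. This is only a sketch: the budget, the loop counts and the function name `emulate` are illustrative assumptions, not figures taken from any real engine.

```python
# Toy model: an emulator that gives up after a fixed instruction budget.
# The budget and loop sizes are illustrative assumptions, not measured values.

def emulate(fake_loop_iterations, instructions_per_iteration, budget):
    """Return True if the payload is reached before the budget runs out."""
    executed = 0
    for _ in range(fake_loop_iterations):
        executed += instructions_per_iteration
        if executed > budget:
            return False  # the emulator aborts; the payload is never seen
    return True


# Junk loops costing 20 million instructions defeat a 5 million budget.
print(emulate(2_000_000, 10, budget=5_000_000))  # False
print(emulate(100_000, 10, budget=5_000_000))    # True
```

Raising the budget only moves the threshold; the malware author can always add more iterations at no cost.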

These and several other families would be well worth a detailed analysis, but instead I will focus on more recent developments.

The observation period for the Backdoor.Cycbot samples spanned only one month, between 11 April 2011 and 11 May 2011. However, I should note that newer variants following the same structure and using the same tricks have continued to appear on a regular basis ever since.

This one is really nasty; the top layer defence uses callback functions and undocumented tricks. It is very clear that the authors of this family were actively looking for (obviously) undocumented leftovers in CPU registers after Windows API calls. These functions use the stdcall calling convention, in which registers EAX, ECX and EDX are designated for use within the function. EAX is used for the return value; the state of the ECX and EDX registers is supposed to be undefined, not to be relied on. However, after some extensive research work, the authors of Cycbot found several cases where the values of ECX and EDX are defined, and they relied on this fact to distinguish real Windows systems from incompletely emulated ones.

The general structure of the top-level obfuscation layer can be divided into four distinct stages, as illustrated in Figure 1.

The first stage features an appropriately selected API call (in our example FindFirstVolume), and both EAX and ECX register values are used in the subsequent calculation. EAX is clear: it should hold the return value from the function. But ECX is not supposed to contain anything specific.

Further investigation revealed that, upon return, ECX points to an address in kernel32 where a C2 (RET) instruction is located. The malware code checks the presence of this byte at the memory location pointed to by ECX.

How on earth does ECX get to point to an address with this very special location and content? It turns out that the commonly used exception handler unwind procedure in the kernel does not clean up the ECX register. The kernel code is the following:

.text:7C869F9C E8 6A 85 F9 FF call SEH_unwind
.text:7C869FA1 C2 08 00 retn 8
...
.text:7C80250B SEH_unwind proc near
.text:7C80250B 8B 4D F0 mov ecx, [ebp-10h]
.text:7C80250E 64 89 0D 00 00 00 00 mov large fs:0, ecx
.text:7C802515 59 pop ecx
.text:7C802516 5F pop edi
.text:7C802517 5E pop esi
.text:7C802518 5B pop ebx
.text:7C802519 C9 leave
.text:7C80251A 51 push ecx
.text:7C80251B C3 retn
.text:7C80251B SEH_unwind endp

Here, the exit point from the procedure is 7C869FA1. This is pushed onto the stack during the call preceding the unwind, where it is popped into ECX and used in a push/ret combination to return to the exit point. However, ECX is not restored to the original value there, as it was not originally saved at the beginning of the FindFirstVolume call. So the ECX register will contain the address of the 7C869FA1 exit point from the kernel procedure when returning to the user code.

In this particular example, due to the invalid buffer address passed, the FindFirstVolume call returns with the INVALID_HANDLE_VALUE error code in EAX, and this value is also multiplied by the expected dword at ECX to determine the condition to continue (but only the lowest byte is used in the evaluation as, depending on the function, the return code set after the C2 byte may differ).
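The reason why only the lowest byte matters can be checked with a small model. The address and the C2 08 00 bytes come from the kernel listing above; the fourth byte, the helper names and the exact form of the arithmetic are assumptions made for illustration.

```python
# Toy model of the Stage 1 check. The exact arithmetic used by the malware is
# an assumption; the point is that the low byte of the product depends only
# on the C2 opcode byte, not on the operand of the retn instruction.
import struct

MASK32 = 0xFFFFFFFF
INVALID_HANDLE_VALUE = 0xFFFFFFFF  # -1 as an unsigned 32-bit value

# Bytes at the exit point 7C869FA1: C2 08 00 (retn 8). The fourth byte is
# not shown in the listing, so 0x90 is an arbitrary placeholder.
memory = {0x7C869FA1: bytes([0xC2, 0x08, 0x00, 0x90])}


def read_dword(addr):
    """Read a little-endian dword from the modelled memory."""
    return struct.unpack("<I", memory[addr])[0]


def stage1_low_byte(eax, ecx):
    """Low byte of EAX * dword[ECX], with 32-bit wraparound."""
    return ((eax * read_dword(ecx)) & MASK32) & 0xFF


result = stage1_low_byte(INVALID_HANDLE_VALUE, 0x7C869FA1)
print(hex(result))  # 0x3e
```

An emulator that returns -1 in EAX but leaves an arbitrary value in ECX would either fault on the read or produce a different byte.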

It is pretty obvious that for this kind of arithmetic calculation any API function that returns -1 as an error code on an invalid argument, and which leaves the exit point address in ECX on return, would be sufficient. And indeed, the malware authors must have done their homework – within the observation period the following API functions filled this role: FindFirstVolumeA, lstrcpynW, PrivMoveFileIdentityW, DosPathToSessionPathW, QueryDosDeviceW, ReplaceFileW, WaitForMultipleObjectsEx, WaitForMultipleObjects and WaitNamedPipeA (and I am sure this is not the full list).

In a slightly different scheme other APIs were used, namely lstrcpyA and FillConsoleOutputCharacterA. In these cases, only the on-error zero return value is checked.

The core element of the second stage is another API call. From tracing the code it turns out that, upon return, the malware expects 0x07 in the EDX register and uses this in calculating the exact address of the callback function needed in the third stage. This was at first a great surprise for me, as EDX is not supposed to contain anything on return (except when returning 64-bit values, which is clearly not the case here). How does the magic value appear in this register? To find out, we need to go into the depths of the kernel code.

A process handle (usually 3, but in one case 1) is passed over to an API call, in our case GetProcessId. This leads to ZwQueryInformationProcess which (since such low handle values are not used on a running Windows system) results in error code STATUS_INVALID_HANDLE (0xC0000008). This status code is passed further to ntdll: RtlNtStatusToDosError, which is supposed to convert this value to an error code using an ordered table of error code mappings. This is an incomplete table that does not contain all of the possible codes. Instead, a range of status codes is mapped to the same error code, and the table contains the starting point and length of each range. The comparison stops when a value is found that is higher than the looked-up code.

In the neighbourhood of the specific error code there are only two codes in the table: STATUS_UNSUCCESSFUL (0xC0000001) and STATUS_INVALID_PARAMETER (0xC000000D). The table also contains a delta value; it is my guess that this represents the length of the interval that maps to the error code in the table. If this is the case, it would mean that status codes from 0xC0000001 to 0xC0000001+delta are mapped to the same system error code. During the process the distance of the queried status code from its lower neighbour in the table is calculated in the EDX register; in this case it will be 0xC0000008-0xC0000001=7. This value is then compared to the delta length of the interval, and if it is smaller, the correct mapping is found. This is not important for the malware, however. The important part is that the EDX register is not cleaned by either of the kernel functions, so the value remains there when the code returns to user mode, and it is used by the malware in the line:
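The range lookup described above can be sketched as follows. The table layout follows the guess made in the text; the interval lengths, the mapped error values and the function name `lookup` are hypothetical placeholders.

```python
# Sketch of a range-based status lookup. The (start, length, error) layout
# follows the guess in the text; lengths and error values are placeholders.

TABLE = [
    # (first status code of the range, length of the range, mapped error)
    (0xC0000001, 0x0C, 31),
    (0xC000000D, 0x01, 87),
]


def lookup(status):
    """Return (mapped error or None, offset as it would be left in EDX)."""
    entry = None
    for start, length, error in TABLE:
        if start > status:
            break  # the comparison stops at the first higher entry
        entry = (start, length, error)
    if entry is None:
        return None, None
    start, length, error = entry
    offset = (status - start) & 0xFFFFFFFF  # distance from the lower neighbour
    return (error if offset < length else None), offset


STATUS_INVALID_HANDLE = 0xC0000008
error, edx = lookup(STATUS_INVALID_HANDLE)
print(edx)  # 7
```

The mapped error is irrelevant to the malware; only the leftover offset of 7 is used.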

.text:00402F06 3B 04 2A cmp eax, [edx+ebp]

EAX should contain NULL after an invalid passed handle; EBP points to the top of the stack. [edx+ebp] points to the highest byte of the dword at the original stack (on reaching the entry point) – which is the return address to kernel32, pushed there when starting the process in CreateRemoteThread. This highest byte of the kernel return address is not supposed to be 0, which is the condition that the malware expects to find.

Obviously, for this phase any other function that returns with STATUS_INVALID_HANDLE, calls RtlNtStatusToDosError and does not restore EDX to its original state, would be sufficient. It turns out that the malware authors limited their scope to functions that call NtQueryInformationProcess with an invalid process handle, after which a call to RtlNtStatusToDosError follows. The result is the following observed list of used functions: GetProcessId, CheckRemoteDebuggerPresent, GetProcessHandleCount, GetProcessAffinityMask, GetProcessWorkingSetSize and GetPriorityClass.

The third stage was stable in the observation period; no changes were observed. The offset at which to continue execution is calculated in EAX (once there, the value of EDX, already used in Stage 2, is used again), and it is passed as the callback address to the function EnumResourceTypesA, which calls this callback function internally at some point. The possible reason why this part never changed could be that it is difficult to find a Windows API call that calls a user-mode callback even if the passed parameters are invalid.

Stage 4 is fairly simple, building up in EAX the address of the next (herein not discussed) stage, which features spaghetti code.

Stage 4 is reached as the result of the callback invocation from within EnumResourceTypesA, as discussed in the previous section.

At offset 00402F3A in our example the malware code overwrites the return address from EnumResourceTypesA on the stack, knowing the exact amount of stack space used by the API function at the point when the callback was invoked. Thus, upon finishing the EnumResourceTypesA call, the execution will not resume at address 00402F1F as would normally happen without this change, but due to the modified return address on the stack, the spaghetti code will be reached.

Emulating this stage correctly is a real challenge, as the emulator should produce exactly the same stack layout and allocation as the original API function, and invoke the callback providing the same conditions.

In the Trojan.Codecpack.Gen!Pac family the analysed anti-emulation code is not around the entry point, but some time later in the execution flow. The entry code features numerous different and repetitive do-nothing API calls, with no expected effect and no expected return values. That should not pose a problem for any decent emulator.

What will be a problem is the following code, taken from one of the variants:

.text:00406A9D 68 68 53 40 00 push offset LibFileName ; “version.dll”
.text:00406AA2 FF 15 7C C2 40 00 call ds:LoadLibraryA/GetModuleHandleA
.text:00406AA8 89 C1 mov ecx, eax
...
.text:00406AEA 41 inc ecx
.text:00406AEB 8B 01 mov eax, [ecx]
...
.text:00406B62 81 F8 75 10 FF 75 cmp eax, DW_SIGNATURE
.text:00406B68 0F 85 3C FF FF FF jnz loc_406AAA

The trojan loads a standard system library using either LoadLibrary or GetModuleHandle. This call should return a handle, which is effectively the base load address of the dll file. The memory image of the system library is then scanned, looking for an identification dword. In the case of Win32 emulators, it is often (if not always) the case that only a small subset of exported functions are actually implemented; the rest are only empty dummy procedures. Thus the code bytes that are present in the real system dlls will not be present in the emulated libraries. In this case, the malware will search through the allocated memory space of the emulator dll, which should result in an exception when reaching the end of the allocated memory block, and abort the execution.
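The scan loop can be modelled in a few lines. The signature value is read from the immediate bytes of the cmp instruction in the listing (75 10 FF 75); the two sample images and the use of IndexError to stand in for the access violation are assumptions made for illustration.

```python
# Model of the byte-by-byte signature scan (inc ecx / mov eax, [ecx] / cmp).
import struct

DW_SIGNATURE = 0x75FF1075  # bytes 75 10 FF 75 read as a little-endian dword


def find_signature(image, signature=DW_SIGNATURE):
    """Return the offset of the signature dword inside the module image."""
    offset = 0
    while True:
        offset += 1  # inc ecx
        if offset + 4 > len(image):
            # On a real system, reading past the mapped image raises an
            # access violation; IndexError stands in for that here.
            raise IndexError("read past the end of the module image")
        (value,) = struct.unpack_from("<I", image, offset)  # mov eax, [ecx]
        if value == signature:  # cmp eax, DW_SIGNATURE
            return offset


# Hypothetical images: one containing real-looking code bytes, one stub.
real_like = b"MZ" + b"\x90" * 30 + bytes.fromhex("7510FF75") + b"\x00" * 8
stub = b"MZ" + b"\xC3" * 64

print(find_signature(real_like))  # 32
```

Calling `find_signature(stub)` raises, which corresponds to the emulated run ending in an exception.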

How do the malware authors know which dwords will not be in the emulator dlls? They may have determined this either by a trial-and-error method, using their own multi-scanner systems, or by dumping the targeted scanner’s memory image, and finding the emulated dlls within the dump. Both options are viable.

During the observation period between 8 March 2010 and 17 June 2010 five dlls were used for this purpose. Shell32.dll was used on 24 occasions, and there were 19, 14 and nine occurrences respectively of shlwapi.dll, gdi32.dll and version.dll. In an early variant, a single appearance of msvcp60.dll was observed, but this was abandoned later. Within these dlls 55 different byte patterns were searched, some of which were used in the context of multiple libraries, thus resulting in 67 different combinations. These are summarized in the table shown in Figure 2.

Altogether, a new combination was released approximately every other day. If you want to beef up your emulator to follow this workload, you must be able to release new emulator updates within a day. Otherwise, by the time an update is added to handle the latest trick it will be obsolete as the malware authors have already switched to a new one. The development effort required to overcome these tricks is trivial, simply consisting of adding the look-up dwords into the emulator dlls, and even the location of these bytes is not important. The real issues are the necessary QA procedures around releasing emulator updates, which make the task close to impossible.

Trojan.WinWebSec.Gen is a very widespread and populated family, with plenty of slightly or very different variants. Our observation period covers more than three months, from 30 December 2010 to 13 April 2011. Needless to say, the development did not stop after that; new versions are still flooding in as I write this article.

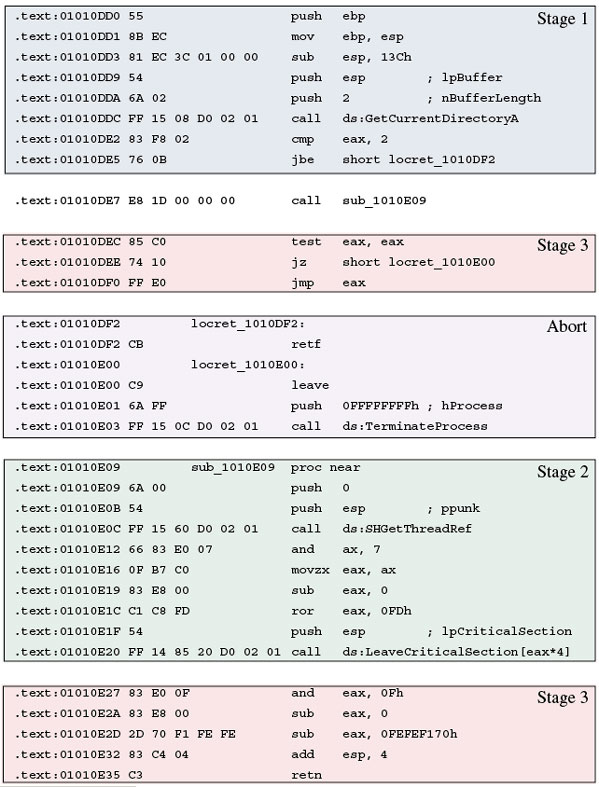

Three different stages were identified in the structure of the top level anti-emulation layer, as illustrated in Figure 3.

Figure 3. Three different stages were identified in the structure of the top level anti-emulation layer of Trojan.WinWebSec.Gen.

Right at the start of execution a Windows API function is called, but not in the way we are used to seeing in malware anti-emulation code (which would be passing invalid arguments to the selected API function, and checking the returning error condition).

In the process of evolution this family goes way beyond that; the existence of some basic operability of the selected function is required. In this section I will enumerate the observed variations, which range from simple cases to more complicated ones, using actual code snippets taken from malware variants that have been observed in the field. Each code snippet represents a new strain of the malware.

The ‘simple’ tricks only check if the emulator reacts to abnormal conditions as a normal Windows installation does – usually taking the form of inappropriate return values.

.text:01010DD9 54 push esp ; lpBuffer
.text:01010DDA 6A 02 push 2 ; nBufferLength
.text:01010DDC FF 15 08 D0 02 01 call ds:GetCurrentDirectoryA
.text:01010DE2 83 F8 02 cmp eax, 2

The two-byte buffer length passed to GetCurrentDirectoryA is obviously too small to hold the current directory path; in this case EAX contains the required buffer length on return, which should be a lot higher than (and definitely not equal to) two bytes. The code checks this basic operation and aborts if the incomplete emulation does not change the value of EAX.
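A minimal model of the documented return value shows why the comparison with 2 is enough to catch a lazy stub. The directory name and the `naive_stub` function are hypothetical; the stub merely illustrates one way an incomplete emulation could fail.

```python
# Model of the GetCurrentDirectoryA return value for a too-small buffer.

def get_current_directory(buffer_length, current_dir):
    """Return what EAX would hold after the call."""
    needed = len(current_dir) + 1  # path plus the terminating null
    if buffer_length < needed:
        return needed              # required size; the buffer is untouched
    return len(current_dir)        # characters written, without the null


def naive_stub(buffer_length, current_dir):
    """Hypothetical lazy emulator stub that echoes the buffer length."""
    return buffer_length


def malware_check(api):
    eax = api(2, "C:\\Users\\victim")  # hypothetical current directory
    return eax != 2                    # cmp eax, 2


print(malware_check(get_current_directory))  # True
print(malware_check(naive_stub))             # False
```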

GetCurrentDirectoryA is obviously not the only API function that behaves this way – a fact that was not overlooked by the malware authors. A couple of other API calls were observed in this family: GetLogicalDriveStringsA and GetTempPathA.

.text:0100C759 55 push ebp
.text:0100C75A FF 15 00 D0 02 01 call ds:SetHandleCount
.text:0100C760 3D 02 04 00 00 cmp eax, 402h
.text:0100C765 73 01 jnb short loc_100C768

SetHandleCount is an obsolete call: it does not really set the handle count nowadays, but rather returns in EAX the handle count provided as input. The code checks this basic operation and aborts if the incomplete emulation does not change EAX.

.text:01022FFD FF 15 00 90 06 01 call ds:GetUserDefaultUILanguage
.text:01023003 85 C0 test eax, eax
.text:01023005 74 14 jz short locret_102301B

This is a simple case, similar to what we were used to in the good old days – the malware checks the UI Language code. Any non-zero return value is acceptable. GetSystemDefaultLCID was also used in a similar fashion.

.text:0100D058 54 push esp
.text:0100D059 FF 15 04 C0 02 01 call ds:QueryPerformanceCounter
.text:0100D05F 58 pop eax
.text:0100D060 3D 02 04 00 00 cmp eax, 402h
.text:0100D065 73 01 jnb short loc_100D068

The value of the high-resolution performance counter is returned by this function, not in EAX, but stored in a LARGE_INTEGER pointed to by the argument passed on call. As it is used here, it will appear on the top of the stack. Both this and the fact that it should not be an unreasonably low number are checked by the malware. It is possible that some emulator implementations used a low value for the counter, which was exploited by the malware.

.text:01071CE2 8D 84 24 00 02 00 00 lea eax, [esp+600h+Buffer]
.text:01071CE9 50 push eax ; lpBuffer
.text:01071CEA 68 00 02 00 00 push 200h ; nBufferLength
.text:01071CEF FF 15 A0 50 0A 01 call ds:GetLogicalDriveStringsW
.text:01071CF5 A9 03 00 00 00 test eax, 3
.text:01071CFA 75 ED jnz short loc_1071CE9

This function fills a buffer with strings that specify valid drives in the system. As lpBuffer is a Unicode character buffer, and a normal drive specification (‘c:\’ for instance) occupies four characters (4*2 bytes) including the terminating zero, the length returned in EAX, which is counted in characters, must be a multiple of four. Thus the lower two bits of the result must be zero.
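The multiple-of-four property is easy to verify on a model of the buffer contents. The drive letters are arbitrary examples and the helper name is an assumption.

```python
# Model of the GetLogicalDriveStringsW buffer and its returned length.

def logical_drive_strings(drives):
    """Return (buffer contents, length in characters as reported in EAX)."""
    # Each entry is letter, colon, backslash and a terminating null.
    body = "".join(letter + ":\\" + "\0" for letter in drives)
    # The list ends with one extra null that is not counted in the length.
    return body + "\0", len(body)


for drives in ("C", "CD", "ACDE"):
    _, length = logical_drive_strings(drives)
    print(drives, length, length & 3)  # the last column is always 0
```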

.text:01071C65 50 push eax ; lpSelectorEntry
.text:01071C66 6A FF push 0FFFFFFFFh ; dwSelector
.text:01071C68 6A FE push 0FFFFFFFEh ; hThread
.text:01071C6A FF 15 38 D1 09 01 call ds:GetThreadSelectorEntry
.text:01071C70 85 C0 test eax, eax
.text:01071C72 75 F1 jnz short loc_1071C65

When this variation was first applied, it was a simple case: an invalid pointer and handle are passed, the call does not succeed, and the return value is zero. However, about 16 days later another variant appeared that used a further twist:

.text:0040D5BD 81 EC 7C 01 00 00 sub esp, 17Ch
.text:0040D5C3 54 push esp
.text:0040D5C4 1E push ds
.text:0040D5C5 6A FE push 0FFFFFFFEh
.text:0040D5C7 FF 15 08 C0 42 00 call ds:GetThreadSelectorEntry
.text:0040D5CD 8B 44 24 04 mov eax, [esp+4]
.text:0040D5D1 3D FF 03 00 00 cmp eax, 3FFh
.text:0040D5D6 73 01 jnb short loc_40D5D9

I have to confess that this is the only code fragment that I could not resolve. Whatever is happening on the stack (the result of which is checked at offset 0040D5CD), it happens within a SYSENTER call. I suspect that a LAR instruction is executed somewhere there, and the 0xcff300 value is placed on the stack used by the kernel code, which during the cleanup part of GetThreadSelectorEntry is copied to the stack of the user code. The malware code does not expect a specific value, rather anything but 0x3ff, which could be a default fill value of some emulator.

.text:004192ED 81 EC 7C 01 00 00 sub esp, 17Ch
.text:004192F3 54 push esp
.text:004192F4 FF 15 08 50 44 00 call ds:GetStartupInfoA
.text:004192FA 8B 44 24 08 mov eax, [esp+8]
.text:004192FE 3D FF 03 00 00 cmp eax, 3FFh
.text:00419303 73 01 jnb short loc_419306

After the call the STARTUPINFO.lpDesktop value is checked from the returned structure (which should point to the string Winsta0\Default, but this fact is not used), and this pointer should look ‘real’, which in this context means having a reasonably high memory value.

.text:00415ABC 54 push esp
.text:00415ABD FF 15 48 10 44 00 call ds:QueryPerformanceFrequency
.text:00415AC3 8B 44 04 FF mov eax, [esp+eax-1]
.text:00415AC7 3D FF 03 00 00 cmp eax, 3FFh
.text:00415ACC 73 01 jnb short loc_415ACF

This is a double check. On return from QueryPerformanceFrequency, EAX should contain 1 if there is a high-resolution performance counter, so [esp+eax-1] addresses the top of the stack. The pointer passed to the call also points to the top of the stack, which is where the frequency value is stored; it should contain a value that is high enough not to be a fake value used by an emulator.

In a couple of variants appearing 19 days later, even higher values (8000h and 800h respectively) were checked, perhaps as a result of a subsequent emulator tweak.

.text:0100D757 81 EC 7C 05 00 00 sub esp, 57Ch
.text:0100D75D C7 04 24 01 00 01 00 mov dword ptr [esp], 10001h
.text:0100D764 54 push esp
.text:0100D765 FF 15 08 30 02 01 call ds:GetNativeSystemInfo
.text:0100D76B 8B 44 24 08 mov eax, [esp+8]
.text:0100D76F 3D FF 03 00 00 cmp eax, 3FFh
.text:0100D774 73 01 jnb short loc_100D777

The placing of 10001h on the stack has no effect. After the call returns [ESP+8] points to absolute offset 0x10000, which is the bottom of the virtual memory allocated to the process, and contains the Windows environment variables, where a decent value is expected.

.text:0040EA5A 68 00 01 00 00 push 100h
.text:0040EA5F 8D 74 24 40 lea esi, [esp+40h]
.text:0040EA63 56 push esi
.text:0040EA64 68 00 00 40 00 push 400000h
.text:0040EA69 FF 15 20 00 44 00 call ds:VirtualQuery
.text:0040EA6F 83 F8 1C cmp eax, 1Ch
.text:0040EA72 74 01 jz short loc_40EA75

The malware checks that, in accordance with the specification, on return EAX contains the size of the filled structure (sizeof(MEMORY_BASIC_INFORMATION)).
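The expected value 1Ch can be confirmed by laying out the 32-bit structure with fixed-width fields. The class name is an assumption; fixed 32-bit integers are used instead of pointer types so that the result does not depend on the machine running the snippet.

```python
# 32-bit layout of MEMORY_BASIC_INFORMATION with fixed-width fields.
import ctypes


class MemoryBasicInformation32(ctypes.Structure):
    _fields_ = [
        ("BaseAddress", ctypes.c_uint32),        # PVOID on 32-bit Windows
        ("AllocationBase", ctypes.c_uint32),     # PVOID
        ("AllocationProtect", ctypes.c_uint32),  # DWORD
        ("RegionSize", ctypes.c_uint32),         # SIZE_T
        ("State", ctypes.c_uint32),              # DWORD
        ("Protect", ctypes.c_uint32),            # DWORD
        ("Type", ctypes.c_uint32),               # DWORD
    ]


print(hex(ctypes.sizeof(MemoryBasicInformation32)))  # 0x1c
```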

In these cases the malware actually checks if the targeted API call really performs the action that it is supposed to.

.text:00410DE9 81 EC 7C 05 00 00 sub esp, 57Ch
.text:00410DEF C7 04 24 FF 03 00 00 mov dword ptr [esp], 3FFh
.text:00410DF6 54 push esp
.text:00410DF7 FF 15 30 10 44 00 call ds:InterlockedIncrement
.text:00410DFD 2D FF 03 00 00 sub eax, 3FFh
.text:00410E02 8B 44 04 FF mov eax, [esp+eax-1]
.text:00410E06 3D FF 03 00 00 cmp eax, 3FFh
.text:00410E0B 73 01 jnb short loc_410E0E

This is the point where dark clouds start gathering above the heads of even those who thought that the previous tricks were just a piece of cake. So far, all the analysed malware samples expected that calls provide appropriate environment and proper return values. From there on, these functions are actually expected to implement the original functionality of the targeted API function. In this case, InterlockedIncrement increments the value pointed to by the passed pointer. Furthermore, this incremented value must be present both in the mentioned pointer and in EAX. These simultaneous conditions are checked with the code above.
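The instruction sequence can be traced with a small model in which the stack is reduced to the single dword at [esp]. This is a sketch: the dictionary-based memory and the `stub_returning_zero` function are assumptions, and the model only reports the values that reach the final comparison.

```python
# Trace of the InterlockedIncrement check; memory holds only the dword at [esp].

def real_interlocked_increment(memory, addr):
    """Increment the dword at addr and return the new value."""
    memory[addr] = (memory[addr] + 1) & 0xFFFFFFFF
    return memory[addr]


def stub_returning_zero(memory, addr):
    """Hypothetical emulator stub that does nothing and returns 0."""
    return 0


def trace(interlocked_increment):
    memory = {0: 0x3FF}                     # mov dword ptr [esp], 3FFh
    eax = interlocked_increment(memory, 0)  # push esp / call
    index = (eax - 0x3FF) & 0xFFFFFFFF      # sub eax, 3FFh
    value = memory.get(index - 1)           # mov eax, [esp+eax-1]
    return index, value                     # value is None outside the slot


print(trace(real_interlocked_increment))  # (1, 1024)
print(trace(stub_returning_zero))         # (4294966273, None)
```

With a faithful implementation the read lands exactly on the incremented dword (400h); with a wrong return value it lands somewhere else entirely.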

.text:004045EC C7 06 45 4C 4F 00 mov dword ptr [esi], ‘OLE’
.text:004045F2 C7 46 04 00 00 00 00 mov dword ptr [esi+4], 0
.text:004045F9 56 push esi
.text:004045FA FF 15 C0 30 43 00 call ds:CharLowerA
.text:00404600 81 3E 65 6C 6F 00 cmp dword ptr [esi], ‘ole’
.text:00404606 75 07 jnz short near ptr locret_40460D+2

You should feel cold sweat running down your neck when looking at the code above. Yes, CharLowerA has to actually transform the string pointed to by ESI properly to lower case. From here on, it is not enough to perform input-output checks in emulated environments, but at least partial implementation of the functionality is required.
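The dword values in the listing can be reproduced from the instruction bytes. The sketch below uses Python's ASCII lower-casing as a stand-in for CharLowerA, which is an assumption that holds only for plain ASCII input such as this.

```python
# The immediate bytes 45 4C 4F 00 are stored in memory as the string "ELO".
import struct

before = struct.pack("<I", 0x004F4C45)  # mov dword ptr [esi], imm32
after = before.lower()                  # ASCII stand-in for CharLowerA
expected = struct.unpack("<I", bytes.fromhex("656C6F00"))[0]  # cmp imm32

print(before, after)                                     # b'ELO\x00' b'elo\x00'
print(hex(struct.unpack("<I", after)[0]), hex(expected)) # 0x6f6c65 0x6f6c65
```

The disassembler displays the constants as ‘OLE’ and ‘ole’ because it prints the dword from the high byte down, while the string in memory reads in the opposite order.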

And this is not the end of the road.

.text:00404823 56 push esi
.text:00404824 6A 20 push 20h
.text:00404826 8D 74 24 50 lea esi, [esp+50h]
.text:0040482A 56 push esi
.text:0040482B 8D 44 24 40 lea eax, [esp+40h]
.text:0040482F C7 00 6F 4E 69 5F mov dword ptr [eax], ‘_iNo’
.text:00404835 6A 04 push 4
.text:00404837 50 push eax
.text:00404838 6A 40 push 40h ; MAP_COMPOSITE
.text:0040483A 2E FF 15 4C 70 43 00 call cs:FoldStringA
.text:00404841 81 3E 6F 4E 69 5F cmp dword ptr [esi], ‘_iNo’
.text:00404847 75 07 jnz short near ptr locret_40484E+2
.text:00404849 83 F8 04 cmp eax, 4
.text:0040484C 74 03 jz short loc_404851

FoldStringA maps one string to another, performing the specified transformation. In the case of MAP_COMPOSITE the accented characters are transformed to decomposed characters. Since there are no accented characters in the source buffer, in reality it is a simple string copy operation, with the number of copied characters being returned in EAX. Both the success of the copy operation and the proper return value are checked by the malware.
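As a rough analogy only, Unicode canonical decomposition (NFD) behaves the same way on unaccented input. The function below is not FoldStringA; it is a hypothetical stand-in that shows why the call degenerates into a copy that returns the character count.

```python
# Rough analogy for MAP_COMPOSITE using Unicode canonical decomposition.
import unicodedata


def fold_composite(src, dest_capacity):
    """Return (output string, number of characters written)."""
    out = unicodedata.normalize("NFD", src)
    if len(out) > dest_capacity:
        return None, 0  # destination too small
    return out, len(out)


print(fold_composite("oNi_", 0x20))          # ('oNi_', 4)
print(fold_composite("\u00e9", 0x20)[1])     # 2: base letter plus accent
```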

The same trick was observed in a different variant using the twin FoldStringW function, the source string being ‘_’ (Unicode).

During analysis of the samples, I found a couple of tricks that did not fit in the usual schemes of the family.

.text:0102FDE7 55 push ebp
.text:0102FDE8 8B EC mov ebp, esp
.text:0102FDEA 81 EC 3C 01 00 00 sub esp, 13Ch
.text:0102FDF0 B8 A0 82 60 83 mov eax, 836082A0h
.text:0102FDF5 85 45 04 test [ebp+4], eax
.text:0102FDF8 74 11 jz short locret_102FE0B

The malware reads the return address back to the kernel stored on the stack. It checks it against a very specific value. I suspect that this is a default value used at the time (4 January 2011) in the emulator of a profiled anti-virus engine.

.text:0102DD53 A1 0C 93 06 01 mov eax, ds:GetModuleHandleA
.text:0102DD58 A9 95 00 72 03 test eax, 3720095h
.text:0102DD5D 74 F4 jz short loc_102DD53

The malware queries the address of the GetModuleHandle function. It checks it against a very specific value. Highly irregular code with a highly irregular load address. I see only one reason why the GetModuleHandle address would be even close to the expected value – it has to be targeted against the load address of a very specific Win32 emulator used at that time (11 January 2011) in the emulator of a profiled anti-virus engine.

The overview of the second stage is the following:

.text:01010E09 6A 00 push 0
.text:01010E0B 54 push esp ; ppunk
.text:01010E0C FF 15 60 D0 02 01 call ds:SHGetThreadRef
.text:01010E12 66 83 E0 07 and ax, 7
.text:01010E16 0F B7 C0 movzx eax, ax
.text:01010E19 83 E8 00 sub eax, 0
.text:01010E1C C1 C8 FD ror eax, 0FDh
.text:01010E1F 54 push esp ; lpCriticalSection
.text:01010E20 FF 14 85 20 D0 02 01 call ds:LeaveCriticalSection[eax*4]

At first glance two subsequent API calls are utilized in this stage. The first one receives invalid arguments (usually a zero pointer), and the resulting error code is used in an arithmetic calculation of an index value. This index value is used in indexing the actual API function from within the import table of the malware executable. As it turns out, in all of the cases the indexing will point to the first import of the dll that appears after kernel32.dll in the import table. Moreover, as it turns out, it is always the same API that is used in the first call.
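The index arithmetic from the listing can be followed step by step. The slot address 0102D020 is taken from the bytes of the indexed call; the return value 0x80004002 is an assumption chosen because its low three bits equal 2, not a value quoted from the sample.

```python
# Trace of the import table indexing: and ax, 7 / ror eax, 0FDh / [eax*4].

def ror32(value, count):
    """32-bit rotate right; x86 masks the count to five bits."""
    count &= 31
    value &= 0xFFFFFFFF
    return ((value >> count) | (value << (32 - count))) & 0xFFFFFFFF


def import_slot_offset(return_value):
    eax = return_value & 0xFFFF & 7  # and ax, 7 / movzx eax, ax
    eax = ror32(eax, 0xFD)           # rotate right by 29, i.e. left by 3
    return eax * 4                   # scaled index used by the call


LEAVE_CRITICAL_SECTION_SLOT = 0x0102D020  # from call ds:...[eax*4]
assumed_return = 0x80004002               # assumption: low three bits are 2

target = LEAVE_CRITICAL_SECTION_SLOT + import_slot_offset(assumed_return)
print(hex(target))  # 0x102d060
```

Under this assumption the computed slot is 0102D060, the same import slot that the first call in the listing uses.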

To make this trick successful, the malware author has to control the import table, which is not difficult. I see no reason why any decent Win32 emulator could not handle this import table indexing trick properly – if they are able to load an executable, they have to interpret the import table properly. So if the emulator goes through the first call successfully, and is able to provide the expected return value, it should handle the second call as well. Therefore I don’t consider the second call to be an anti-emulation trick (it does not present any greater hurdle), it is more like an anti-analysis trick.

Only the appropriate return value is required for the emulation of this stage. The table in Figure 4 lists the corresponding API function/expected return value pairs that we found in this malware family.

The third stage is essentially the same in all variants. Using the return value from the last call in Stage 2, a series of arithmetic calculations is performed, and finally an absolute memory address is calculated in EAX. The malware jumps there.

.text:01010E27 83 E0 0F and eax, 0Fh
.text:01010E2A 83 E8 00 sub eax, 0
.text:01010E2D 2D 70 F1 FE FE sub eax, 0FEFEF170h
.text:01010E32 83 C4 04 add esp, 4
.text:01010E35 C3 retn
...
.text:01010DEC 85 C0 test eax, eax
.text:01010DEE 74 10 jz short locret_1010E00
.text:01010DF0 FF E0 jmp eax
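The arithmetic in the listing above can be traced with 32-bit wraparound to see where the jump lands. The sample return values are arbitrary; the function name is an assumption.

```python
# Trace of the Stage 3 address calculation with 32-bit wraparound.

def stage3_target(return_value):
    eax = return_value & 0x0F               # and eax, 0Fh
    eax = (eax - 0) & 0xFFFFFFFF            # sub eax, 0
    eax = (eax - 0xFEFEF170) & 0xFFFFFFFF   # sub eax, 0FEFEF170h
    return eax                              # later reached via jmp eax


for low_nibble in (0x0, 0x5, 0xF):
    print(hex(stage3_target(low_nibble)))
# 0x1010e90
# 0x1010e95
# 0x1010e9f
```

Subtracting 0FEFEF170h is the same as adding 01010E90h, so only the low four bits of the return value select the destination within a 16-byte window of the sample's own code.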

To summarize the requirements for successful emulation of contemporary malware families, your emulator must be: rich (i.e. recognize essentially all possible API calls and handle error conditions), fat (i.e. must contain typical and common byte sequences), feature-rich (i.e. a certain subset of API calls must be correctly implemented), and occasionally clumsy (i.e. leave leftovers in CPU registers). In short, a full – more precisely realistic – Win32 emulation is needed. If you are in the lucky position of already having that, you don’t need to read further than this point.

But it is not enough to do it right, you also have to do it fast.

The table below summarizes the mean time between the significant changes in each family. In this context ‘significant’ means something that is likely to require a change in the emulation, and that we were able to observe in the appearance of the new variant. As mentioned, it is certain that I have missed several variants in each family. Therefore the average time between the appearance of variants is overestimated – in reality they should appear somewhat more frequently. Nevertheless, even these overestimated numbers look scary enough. For me, at least.

| Family | Mean time between variants (days) | Variants | First variant | Last variant |
| --- | --- | --- | --- | --- |
| Backdoor.Cycbot | 2.31 | 13 | 11/04/2011 | 11/05/2011 |
| Trojan.Codecpack.Gen!Pac | 1.64 | 77 | 12/03/2010 | 16/07/2010 |
| Trojan.Winwebsec.Gen | 3.25 | 32 | 30/12/2010 | 13/04/2011 |

Table 1. Average update times in the three families.
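The table does not state how the mean was calculated. As an assumption, dividing the number of days between the first and last variant by the variant count reproduces the published figures:

```python
# Recompute the mean time between variants from the dates in Table 1.
# The formula (elapsed days / variant count) is an assumption.
from datetime import date

FAMILIES = {
    "Backdoor.Cycbot": (date(2011, 4, 11), date(2011, 5, 11), 13),
    "Trojan.Codecpack.Gen!Pac": (date(2010, 3, 12), date(2010, 7, 16), 77),
    "Trojan.Winwebsec.Gen": (date(2010, 12, 30), date(2011, 4, 13), 32),
}


def mean_days(first, last, variants):
    """Average number of days per variant over the observation period."""
    return (last - first).days / variants


for name, (first, last, variants) in FAMILIES.items():
    print(name, round(mean_days(first, last, variants), 2))
# Backdoor.Cycbot 2.31
# Trojan.Codecpack.Gen!Pac 1.64
# Trojan.Winwebsec.Gen 3.25
```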

Overall, it seems like this is a lost battle. But not necessarily. In fact, there are a couple of solutions – though full of pain. I am afraid there is no easy way, but after a few years fighting viruses, one gets used to that.

Let us assume for the sake of argument that our purpose, as bizarre as it sounds, is to provide proactive defence against new malware threats.

If your research-development-QA-release cycle regarding emulator enhancement for issues detailed in previous sections (which are essentially minor changes from a development point of view) is shorter than a day, then the situation is not hopeless. Then you would end up covering about half of the distribution campaign of the given variants, still providing measurable proactive protection for the second half of the campaign. Achieving such a short cycle is far from easy. Depending on the nature of your emulator-based detection definitions, changes in the emulation environment may occasionally change the execution flow of executables, thus unexpectedly breaking totally unrelated definitions. Another disadvantage is that the first day or so of the distribution campaign has to be handled with one of the traditional reactive methods.

If the development cycle time is longer than a couple of days, you need a different approach. One can use the actual Windows environment with a behaviour blocking technology which, since it utilizes the real Windows environment, is fully compatible (if the user has the environment that the malware expects). But even then this solution is not a pre-execution defence, as the malware has to be executed.

Another possible solution is to use the real Windows environment in a sandbox to extend the emulator with the missing features. Careful design and implementation is required in order to contain the malware within the safe boundaries.

I know that pattern matching is a dead technology (see [16]). It has been for 20 years. But in some cases, it can be handy. Ironically, in this particular case it produces longer lasting definitions than emulator tweaking, since the basic structure of all three families’ code is pretty much constant, only the particular API functions are changed. In fact, this was the reason why several different members of these families went unnoticed by us: our definitions caught them, and because we have so much to do, we mostly look at samples that we don’t detect. This does not mean that our definitions did not have to be changed. They did, many times. But not as frequently as the new emulator tricks appeared.

Overall, we can state that the most profiled malware families of the day push AV engines to their limits, and sometimes even a little over them. We can’t stop for a moment. But this is our job. Not everyone can be a rocket scientist.