2010-08-01

Abstract

With another epic haul of 54 products to test this month, the VB test team could have done without the bad behaviour of a number of products: terrible product design, lack of accountability for activities, blatant false alarms in major software, numerous problems detecting the WildList set, and some horrendous instability under pressure. Happily, there were also some good performances to balance things out. John Hawes has the details.

Copyright © 2010 Virus Bulletin

Yes, I know. Windows Vista. Not the most lovable of platforms. It was released to a barrage of bad press, faced all kinds of criticisms, from slowness to insecurity to downright ugliness, and wasn’t really considered reliable until the release of service pack 2 – by which time Windows 7 was on the horizon promising more of the same only better. The VB test team had entertained vague hopes that, once Windows 7 was out, we might be spared the trauma of another Vista test, but considering the surprisingly large user base still maintained by the now outmoded operating system (estimates are that it still runs on over 20% of systems), it seemed a little premature to give up on it entirely. So, this may be one last hurrah for a platform that has caused us much grief and aggravation in the past.

After the massive popularity of the last desktop comparative (see VB, April 2010, p.23) we expected another monster haul of products, and sure enough the submissions came flooding in. Although not quite breaking the record, this month’s selection of products is still remarkably large and diverse, with the majority of the familiar old names taking part and a sprinkling of newcomers to provide some extra interest. Given what seems like a new, much higher baseline, we may have to consider revising our rules for free entry to these tests – as the situation currently stands, any vendor may submit up to two products to any single test without charge, and a small fee is levied on third and subsequent entries. This month only one vendor chose to submit more than two products, but in future it may become necessary to impose charges on any vendor submitting more than one product – which would at least provide us with extra funds for hardware and other resources involved in running these tests, and possibly allow us some time and space to work on new, more advanced testing.

Setting up Vista is actually a fairly painless process these days, with the install media including SP2 from the off and thus skirting round some of the problems encountered in our first few exposures to the platform. The installation process is reasonably simple, with just a little disk partitioning and so on required to fit in with our needs. Once up and running, no additional drivers were required to work with our new batch of test systems; after installing a few handy tools such as archivers and PDF viewers, and setting the networking and desktop layouts to our liking, images were taken and the machines were ready to go.

Several of the products entered this month required Internet access to update or activate. These were installed in advance, allowed to go online to do their business, and then fresh images were taken for later use. Test sets were then copied to the test machines.

This month’s core certification set was synchronized with the June 2010 WildList, which was released a few days prior to our official test set deadline of 24 July. The list was once again fairly unspectacular, with the bulk of the contents made up of social networking and online gaming data stealers. Of most note, perhaps, were three new strains of W32/Virut, the complex polymorphic virus which has been the bane of many a product over the last few years. These three were replicated in reasonably large numbers to ensure a thorough workout for the products’ detection routines; one of the three proved more tricky to replicate than the others, and credit is due to the lab team for getting our prototype automated replication system running in a way which could persuade it to infect a large enough set of samples for our needs. Including a few thousand samples of each polymorphic virus on the list, the WildList test set contained some 9,118 unique samples.

The other side of the certification requirements, the clean set, was updated and expanded as usual, with large swathes of new packages and package versions gathered from the most popular items on leading download sites, as well as additional items from CDs, DVDs and other media obtained by the lab in recent months. Among the items added were a number of tools related to television and other media-viewing hardware, and also quite a lot of items connected to document manipulation. In all, after a purging of some older and less relevant items, the clean set came in at close to 400,000 unique files.
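The two sides of the certification requirements, as they are applied in the results reported in this review, can be summarised in a minimal sketch. The class and field names are hypothetical, and the treatment of adware or packer warnings as something other than a false alarm is an assumption drawn from the way such alerts are handled in the write-ups, not a statement of the formal rules.

```python
from dataclasses import dataclass


@dataclass
class CertificationResult:
    """Hypothetical per-product summary of the two certification sets."""
    wildlist_missed_on_demand: int
    wildlist_missed_on_access: int
    # Assumption: only full detections count here, not adware or packer warnings.
    clean_false_alarms: int


def earns_award(result: CertificationResult) -> bool:
    """True when no WildList sample is missed and the clean set raises no false alarms."""
    return (
        result.wildlist_missed_on_demand == 0
        and result.wildlist_missed_on_access == 0
        and result.clean_false_alarms == 0
    )
```

Under this sketch a single missed WildList sample in either mode, or a single false alarm in the clean set, is enough to deny an award.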

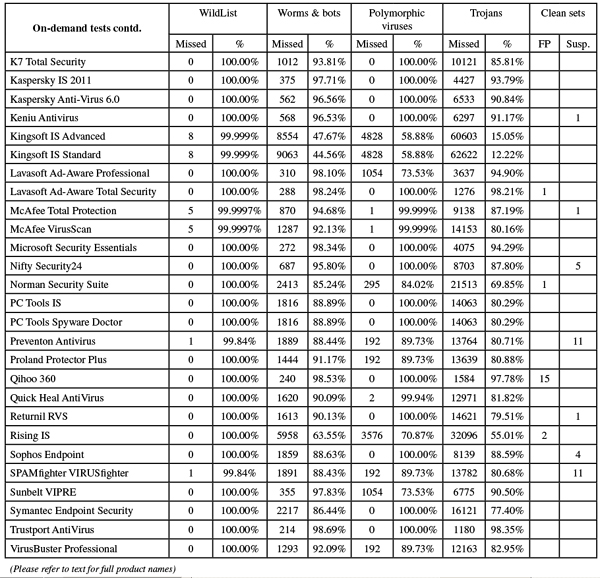

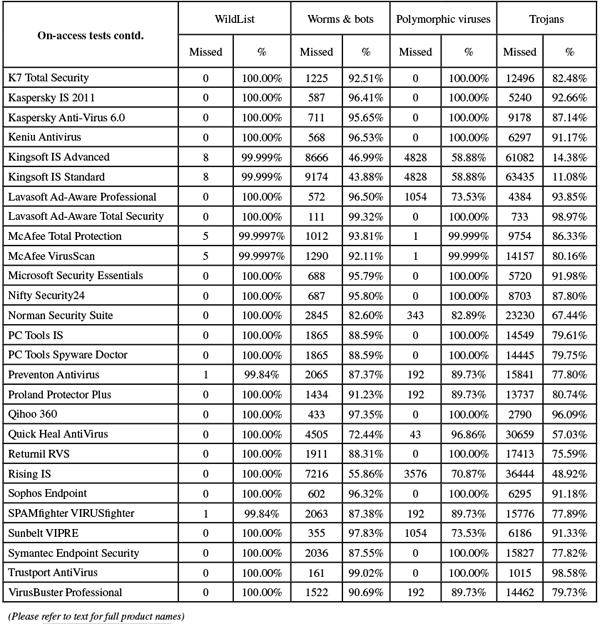

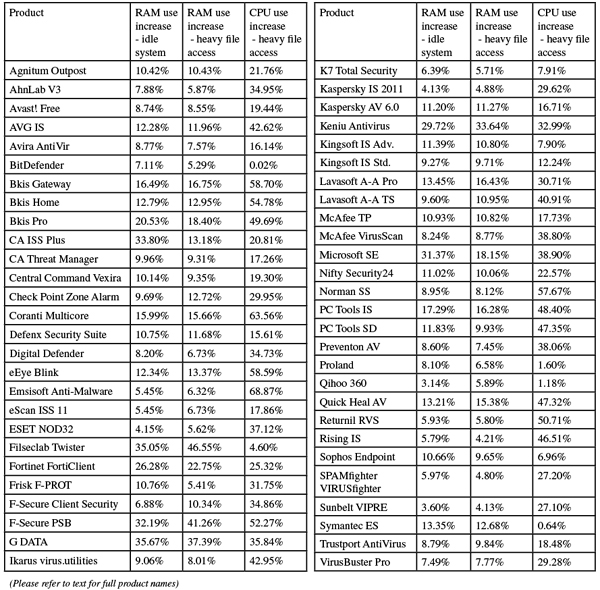

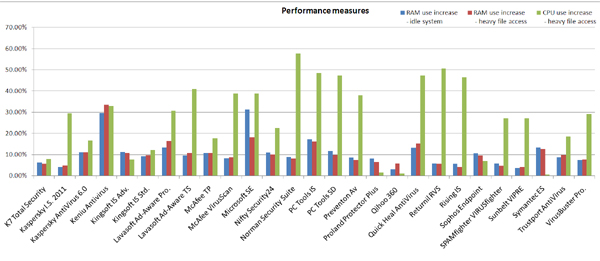

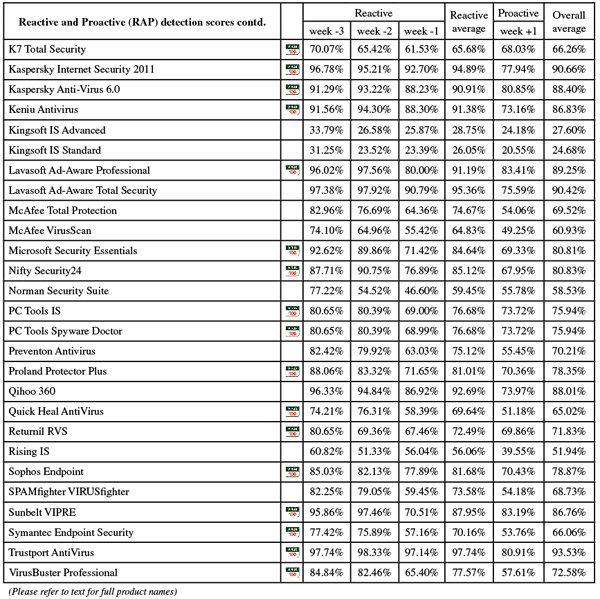

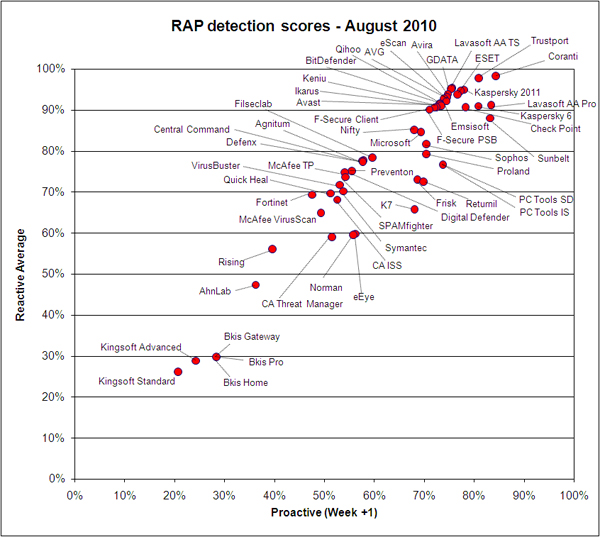

The speed sets were left pretty much unchanged from previous tests, and the measures of RAM and CPU usage we have been reporting recently were recorded as before. The other detection test sets were compiled as usual, with the RAP sets put together from significant items gathered in the three weeks leading up to the 24 July product deadline and the week following, and the trojans and worms & bots sets bulked up with new items received in the month or so between RAP sets. With further expansion of our sample-gathering efforts the initial numbers were fairly high, but every effort was made to classify and validate as much as possible prior to the start of testing, to keep scan times to a minimum. Further validation continued during testing and items not meeting our requirements were struck from the sets; in the end, the RAP sets contained around 10,000 samples per week, with just over 70,000 in the trojans set and 15,000 in the worms & bots set. The polymorphic set remained largely the same as in previous tests, with the main addition being some older Virut strains which have fallen off the WildList of late, in expanded numbers.
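The way samples fall into the four RAP sets can be illustrated with a short sketch. It assumes each sample carries a `first_seen` date, that the deadline day itself belongs to the last pre-deadline week, and that the set labels are as shown; all three are assumptions made for the example and do not describe the lab's own tooling. The year of the default deadline is inferred from the issue date.

```python
from datetime import date
from typing import Optional

# Deadline as given in the text; the year is inferred from the issue date.
DEADLINE = date(2010, 7, 24)


def rap_week(first_seen: date, deadline: date = DEADLINE) -> Optional[str]:
    """Return a (hypothetical) RAP set label, or None if outside the four-week window."""
    offset = (first_seen - deadline).days
    if 1 <= offset <= 7:
        return "week+1"  # gathered in the week after the deadline
    if -21 < offset <= 0:
        # offsets 0..-6 -> week-1, -7..-13 -> week-2, -14..-20 -> week-3
        return f"week-{(-offset) // 7 + 1}"
    return None
```

Samples in the three pre-deadline sets test reactive detection of items the products could already have seen, while the post-deadline set tests detection of items that appeared after the products were frozen.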

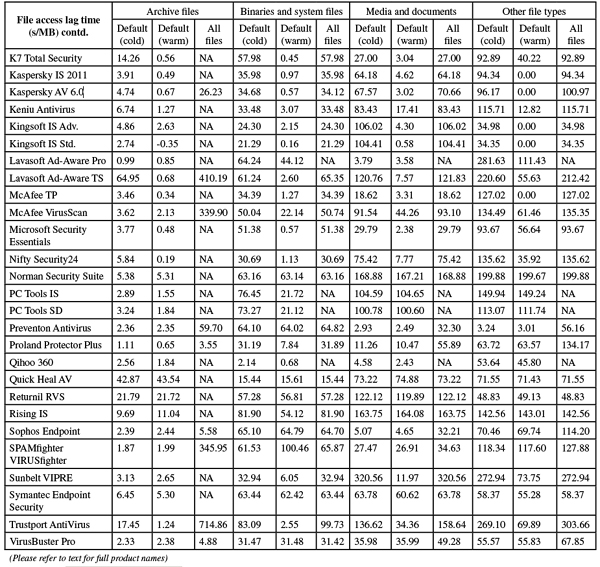

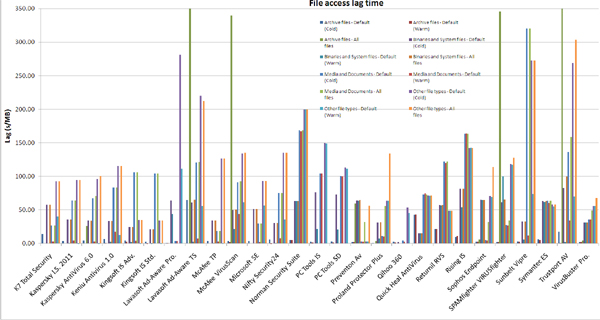

One other addition was made, to the set of samples used to measure archive scanning. This was enlarged slightly to include zipx format, a new and improved version of zip archiving used by the latest version of WinZip. We also included self-extracting executable archives compressed using the rar format. These samples are produced by adding the Eicar test file and a clean control file to an archive of each type. The first level archives are then added, along with another clean control file, to another archive of the same type to make the level 2 sample, and so on. The purpose of these is to provide some insight into how deeply each product analyses various types of compressed files, to help with comprehension of the speed results we report. Thus, if a product has a slow scanning speed but delves deeply into a wide range of archive types, its times and lags should not be directly compared with a product which analyses files less deeply. Another copy of the Eicar file is included in the test set uncompressed but with a randomly selected, non-executable extension, to show which products are relying on extension lists to determine what is scanned. This may have some effect on speeds over the other speed sets, notably the ‘media & documents’ and ‘other file types’ sets, which may include many files without standard executable extensions.
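The nesting scheme described above can be sketched in a few lines. This is only an illustration under stated assumptions: it covers plain zip alone, uses a placeholder payload where the real samples hold the Eicar test file, and the names `build_nested_zip` and `nesting_depth` are invented for the example and are not taken from the lab's tooling.

```python
import io
import zipfile

# Placeholder payload: a real sample would contain the Eicar test file here.
DETECTABLE = b"PLACEHOLDER-FOR-EICAR-TEST-FILE"
CLEAN = b"clean control file\n"


def build_nested_zip(depth: int) -> bytes:
    """Return the bytes of a zip sample nested `depth` levels deep."""
    if depth < 1:
        raise ValueError("depth must be at least 1")
    # Level 1 holds the detectable file plus a clean control file.
    members = {"eicar.com": DETECTABLE, "clean.txt": CLEAN}
    data = b""
    for level in range(1, depth + 1):
        buf = io.BytesIO()
        with zipfile.ZipFile(buf, "w", zipfile.ZIP_DEFLATED) as zf:
            for name, payload in members.items():
                zf.writestr(name, payload)
        data = buf.getvalue()
        # The next level wraps this archive alongside another clean control file.
        members = {f"level{level}.zip": data, "clean.txt": CLEAN}
    return data


def nesting_depth(data: bytes) -> int:
    """Count how many zip layers must be opened to reach the detectable file."""
    depth = 0
    while True:
        with zipfile.ZipFile(io.BytesIO(data)) as zf:
            depth += 1
            names = zf.namelist()
            if "eicar.com" in names:
                return depth
            inner = [n for n in names if n.endswith(".zip")]
            data = zf.read(inner[0])
```

A product that opens archives only two layers deep would find the payload in `build_nested_zip(2)` but not in `build_nested_zip(3)`, which is the kind of distinction the archive-depth results are meant to show.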

With all this copied to the secondary hard drives of the lab systems, we were ready to get down to some testing.

Agnitum’s Outpost is a fairly thorough suite solution which includes the company’s renowned firewall, an anti-malware component based around the VirusBuster detection engine, some proactive protection, web content filtering, spam blocking and more. Of course, only a subset of the protection provided by the suite is properly measured here. As a result of the defence in depth offered, running the 99MB pre-updated install package leads to an installation process which has rather more than the usual number of steps and takes a few minutes to get through; at the end a reboot is required to get everything settled into place, and on initial boot-up the system took a little longer than usual to return control as the various components were activated.

When we finally got access to the main interface we noted it looked slightly refreshed, with a more curvy and shiny feel, though much of this could have been the impact of Vista’s own shininess. We have long been fans of the simple and businesslike design and layout of the Agnitum GUI and it has lost none of its efficiency and solidity. With so many components here to control, the anti-malware configuration is fairly basic but offers the standard options in a lucid and logical style. Some caching of data meant that scanning of previously checked files was very speedy and although initial scanning speeds were medium, the ‘warm’ measures were much more impressive. RAM usage remained fairly steady – at the upper end of the middle of the scale – regardless of whether file access was taking place, with CPU usage similarly placed against other products in this month’s test.

We noted that the product appeared not to be looking inside the new zipx format archives, though it was not clear whether this was down to a lack of ability to decompress the format or the product merely not yet recognizing the extension as an archive type. Detection rates across the standard sets were respectable, and RAP scores started well for older items before falling off fairly sharply in the newer sets. No problems were encountered in the clean or WildList sets, and Agnitum gets this month’s comparative off to a good start with a well-earned VB100 award.

AhnLab also provided an installer with the latest updates included, measuring just over 96MB, but this time the installation process was much faster and simpler. Only the basic steps of EULA, licensing, location and component selection were required, and within 30 seconds it was all up and running with no reboot necessary.

The interface seemed to have had some work done on it, looking quite pleasant in the glitzy surroundings of Vista, and with configuration controls easier to find and clustered in a more rational and logical fashion. Again, several additional components are provided besides the anti-malware basics, including modules labelled ‘network protection’, ‘content filtering’, ‘email protection’ and ‘system tuning’. One notable feature of the redesign is an end to the separation of scans for malware and spyware, although these continue to be logged separately.

Running through the performance test took some time, as the on-demand scanner has pretty thorough default settings; scan times were not unreasonable, but a lack of caching of results meant that repeat scans took as long as the first run. RAM usage was fairly low, and CPU drain not too heavy either, even under heavy stress, but lag times to access files were perhaps just a shade above average. The detection tests also proved somewhat drawn out: the initial on-access run suffered no problems but subsequent on-demand scans were hampered by the repeated appearance of on-access alerts, comprising the last thousand or so detections from the on-access test. These appeared every few minutes, over and over again even after rebooting the system, and were appended repeatedly to logs as well. The heavy toll of this odd behaviour may have contributed to a crash which occurred during one of the main test set scans, and also to the rather long wait for the logging system to export results.

In the end, however, full results were obtained, and the crashing problem was not repeated. We found fairly decent scores in the main test sets, and while AhnLab’s scores won’t trouble the leaders on the RAP quadrant, they were close to the main pack. Checking the scan results, we were worried at first that a handful of files in the WildList set had been missed on access, but looking more closely at the product’s logs showed that the items in question had in fact been detected and logged by the anti-spyware component. This claimed to have blocked access to them but had clearly not done so as thoroughly as the full anti-malware module would have done, as our opener tool was able to access and take details of the files in question. However, as the VB100 rules require only detection and not full blocking of malicious files this did not count against AhnLab, and with no false alarms in the clean sets, a VB100 award is duly earned.

Enough time has been spent in these pages drooling over the beauty of Avast’s latest version; suffice to say that after several appearances in our tests it has lost none of its lustre. The installer, a mere 51MB with all updates included, runs through in a handful of steps, one of which is the offer to install Google’s Chrome browser, and is all done in a few seconds with no need to reboot.

The change in the company name is clearly still filtering through, with some of the folders used for the install still bearing the old ‘Alwil’ name, but the main interface remains glistening, smooth and solid as a rock. On-demand scanning speeds were lightning fast, with no indication of any speed-up in the ‘warm’ scans but not much room for improvement over the cold times anyway. With feather-light impact on file access, and fairly sparing use of both memory and processor cycles, Avast does well in all the performance measures this month.

Detection rates across the sets were also pretty superb, and the WildList and clean sets presented no problems to the product, which brushed everything aside with aplomb. With extra kudos for prettiness, reliability and ease of testing, Avast comfortably wins another VB100 award.

AVG’s current flagship suite was also provided pre-updated, the installer measuring a fairly hefty 109MB. As another multi-faceted suite the installation process had several stages, including the offer of a Yahoo! toolbar, but was completed within a couple of minutes, ending with information on sending detection data back to base and an ‘optimization scan’, which was over in a minute or two; no reboot was required.

The product’s main interface has a fairly standard layout, which is a little cluttered thanks to the many modules, several of which are not covered by our standard tests. With some pretty thorough default settings on demand, initial scans took quite some time to complete, but judicious ignoring of previously scanned files – of certain types at least – made for some much faster ‘warm’ runs. File access slowdown was not too heavy, and RAM usage was well below that of the more draining products seen this month, while CPU usage was towards the middle of the scale.

Running through the detection tests was also a mix of fast and slow. One run – in which the scan priority was bumped up to get through the infected sets faster – zipped merrily to what appeared to be the end of the job, then lingered at ‘finishing’ for several more hours, while the number of detections continued to rise; it gave the impression that the ‘fast scan’ option simply sped up the progress bar, and not the actual scan time at all.

Despite this minor (and rather specialist) issue, everything completed safely and reliably, with some splendid detection rates across the sets, and RAP scores were particularly impressive once again. The WildList and clean sets were handled impeccably, and AVG also earns another VB100 award.

The paid-for version of Avira’s AntiVir came as a standard installer package of 46MB with additional updates weighing in at a smidgen under 35MB. The installation process is made up of the standard selection of stages, plus a screen advising users that they might as well disable Microsoft’s Windows Defender (although this action is not performed for you). The whole job was done in under a minute and no reboot was needed.

The main interface was another which seemed to have had a bit of a facelift, making it a little slicker-looking than previous versions, although some of the controls remain a little clunky and unintuitive, to us at least. The configuration system offers an ‘expert mode’ for more advanced users, which more than satisfied our rather demanding requirements, with a wealth of options covering every possible eventuality.

Speed tests tripped through in good time, with no sign of speeding up in the ‘warm’ scans but little need for it really, and performance measures showed fairly low use of both memory and CPU, with a pretty light touch on access too. Detection rates were as top-notch as ever, with excellent scores across all sets, RAP scores being especially noteworthy. No issues were observed in the WildList or the clean set, and a VB100 award is easily earned.

BitDefender’s latest corporate solution was delivered to us as a 122MB installer, which ran through the standard set of stages plus a step to disable both Windows Defender and the Windows Firewall in favour of the product’s own protection. The process was pretty speedy, although a reboot was required at the end to fully implement protection.

The interface – making its debut on our test bench – was plain and unflashy, with basic controls on the main start page and an advanced set-up area providing an excellent degree of configurability as befits the more demanding business environment. It all seemed well laid out and simple to navigate, with everything where it might be expected to be.

Running through some tests, however, showed that there may still be a few minor bugs that need ironing out. The heavy load of the detection tests brought up error messages warning that the ‘Threat monitor’ component had stopped working. By breaking the tests down into smaller sections we managed to get to the end though, and this problem is unlikely to affect most real-world users who are not in the unfortunate position of being bombarded with tens of thousands of malicious files in a short space of time.

Slightly more frustrating was an apparent problem with the on-demand scan set-up. This allowed multiple folders to be selected for a single scan; on several occasions it reached the end of the scan and reported results, but seemed only to have covered the first folder on the selected list. Again, re-running the tests in smaller chunks got around this issue quite simply.

On-demand scanning speeds were good, although in some of the scans hints of incomplete scanning were again apparent; the media & documents sets were run through in a few seconds, time and time again, but re-scanning different sub-sections produced notably different results, with one top-level folder taking more than two minutes to complete. On-access speeds were pretty fast, while RAM usage was tiny and CPU drain barely noticeable – several of the measures coming in below our baselines taken on unprotected systems. Meanwhile, detection rates were consistently high, with some splendid scores in the RAP sets. With the WildList dealt with satisfactorily, only the clean sets stood between BitDefender and another VB100 award. Here, however, things took an unexpected turn, with a handful of PDF files included in a technical design package from leading development house Corel flagged as containing JavaScript exploits. These false alarms were enough to deny BitDefender an award this month, and did not bode well for the many other products that incorporate the popular engine.

Once again Bkis submitted a selection of products for testing, chasing that elusive first VB100 after some close calls in recent tests. The first product on test is the Gateway Scan version, which came as a 165MB package including all required updates. The installation process is remarkably short and simple, with a single welcome screen before the lightning fast set-up, which even with the required reboot got the system protected less than half a minute after first firing up the installer. The main GUI is unchanged and familiar from previous entries, with a simple layout providing a basic selection of options.

On-demand speeds were fairly sluggish compared to the rest of the field, and the option to scan compressed files added heavily to the archive scanning time despite only activating scanning one layer deep within nested archives. This time was included in our graphs, in a slight tweak to standard protocol. File access times were very slow as well, and CPU and RAM usage well above average.

In the on-demand scans scores were a little weaker than desirable, with RAP results also fairly disappointing, but the WildList seemed to be handled properly. On-access tests were a little more tricky, with the test system repeatedly suffering a blue screen and needing a restart; this problem was eventually pinned down to a file in the worms & bots set, which seemed to be causing an error somewhere when scanned. The WildList set was handled smoothly, although initial checking seemed to show several Virut samples not logged; further probing showed that this was down to an issue with the logging sub-system trying to pump out too much information too quickly, and retrying brought together a full set of data showing complete coverage. On its third attempt, Bkis becomes this month’s first new member of the elite circle of VB100 award holders.

The home version of BKAV resembles the Gateway Scan solution in many respects, with another 165MB installer and a similarly rapid and simple set-up process. The interface is also difficult to tell apart, except for the colour scheme which seemed to be a slightly different shade of orange from the Gateway product.

Also similar were the slowish speeds both on demand and on access, with pretty heavy resource consumption. The problem with the on-access tests of the worms & bots set also recurred, although in this case we only observed the product crashing out rather than the whole system going down. Once again, logging issues were noted on-access, which were easily resolved by performing multiple runs. The logging system was added by the developers to fit in with our requirements, and real-world users would simply rely on the default auto-clean system. Scores were found to be generally close to those of the Gateway product, although with slightly poorer coverage of older and more obscure polymorphic viruses; the WildList and clean sets were handled very nicely, and a second VB100 is earned by Bkis.

The third of this month’s entries from Bkis is a ‘professional’ edition, but once again few differences were discernible in the install package or set-up process, the interface design, layout or the performance.

Scores – when put together avoiding problems with the logs – showed similar if not identical rates to the Gateway solution, and with an identical showing in the core certification sets Bkis makes it a clean sweep, earning a hat-trick of VB100 awards in a single go – surely some kind of record.

CA’s home-user product was provided as a 157MB installer package needing further online updates; the initial set-up process was fairly straightforward, with a handful of standard steps including a EULA, plus an optional ‘Advanced’ section offering options on which components to install. After a reboot, a quick check showed it had yet to update, so this was initiated manually. There were a few moments of confusion between two sections of the slick and futuristic interface – one, labelled ‘Update settings’, turned out to provide settings to be updated, while another, in a section marked ‘Settings’ and labelled ‘Update options’, provided the required controls for the updates. The update itself took a little over half an hour, at the end of which a second update was required.

The interface has a rather unusual look and feel and is at first a little tricky to navigate, but with some familiarity – mostly gained by playing with the spinning-around animated main screen – it soon becomes reasonably usable. With some sensible defaults, and a few basic options provided too, zipping through the tests proved fairly pleasant, with some remarkable speed improvements in the ‘warm’ scans on demand and a reasonable footprint on access. RAM usage while the system was idle was notably high, hinting at some activity going on when nothing else requires resources, but this effect was much less noticeable when the system was busy.

Detection tests brought up no major issues until an attempt to display the results of one particularly lengthy scan caused the GUI to grey out and refuse to respond – not entirely unreasonable, given the unlikelihood of any real-world user experiencing such large numbers of infections on a single system. Re-running scans in smaller chunks easily yielded results, which proved no more than reasonable across the sets.

In the clean sets, a single item from Sun Microsystems was flagged as potential adware, which is perhaps permissible under the VB100 rules, but in the WildList set a handful of items added to the most recent list were not detected, either on demand or on access, and CA’s home suite does not make the grade for a VB100 award this month.

The business-oriented offering from CA is considerably less funky and modern than its home-user sibling, having remained more or less identical since as long ago as 2006. The installer – which we have kept on file for several years – is in an archive of a complete installation CD which covers numerous other components including management utilities and thus measures several hundred MB. It runs through fairly quickly now with the benefit of much practice, but involves a number of stages, including several different EULAs to scroll through. A reboot is needed to complete the process.

Once set up and ready, the interface is displayed by the browser and suffers a little from some suspect design decisions, with many of the options ephemeral and subject to frequent resetting. Buttons and tabs can be slow to respond, and the logging system is both awkward to navigate and easily overwhelmed by large amounts of information. However, scanning speeds are as impressive as ever, with low drain on resources and minimal slowdown on accessing files. Behaviour was generally good throughout, with no problems with stability or loss of connection to the controls. Detection results were somewhat poorer than the home-user product in some sets, however, and with a single WildList file missed CA fails to earn any VB100 awards this month.

Vexira was provided this month as a 55MB installer accompanied by a 66MB update bundle. The set-up process seemed to run through quite a number of steps but they were all fairly standard and didn’t require much thought to get through; with no reboot needed, protection was in place in under a minute. On firing up the interface, we recoiled slightly from the fiery red colour scheme, glowing brightly against the glittery Vista desktop, but its design and layout is familiar from the VirusBuster product on which it is closely modelled and we soon found ample and fairly accessible controls. Set-up of on-demand scans is perhaps a little fiddly, but after some practice the process soon became second nature.

Scanning speeds were pretty decent, especially in the archive set, with most archive types being scanned to some depth by default, although the new zipx format seemed to be ignored. On-access speeds were average overall, and rather slow in some types of files, but resource usage was generally fairly low. Detection rates were fairly decent across the sets, with a reasonable showing in the RAP sets, and with no problems in the WildList or clean sets Vexira makes it safely to another VB100 pass.

Check Point submitted its mid-range suite for this test, which was provided as a 133MB installer with an additional 68MB of updates in a fairly raw state for simply dropping into place. The installation process went through several steps, including the standard EULA and warnings about clashing with other products, as well as the offer of a browser toolbar and some set-up for the ‘auto-learn’ mode. On completion a reboot is required and a quick scan is run without asking the user. The design and layout are sober, serious and fairly easy to find one’s way around, and ample controls were provided, rendering testing fairly easy. Scanning speeds were excellent with the default settings – which are fairly light – and still fairly decent with deeper and more thorough scanning enabled. Resource consumption was not too high, and file access times not bad either.

In the detection sets we encountered a single issue where a scan seemed to hang. When trying to reboot the system to recover from this, another hang occurred at the logout screen, and the machine needed a hard restart. The problem did not recur though, and results were soon obtained, showing the usual excellent detection rates across the sets, with a pretty decent showing in the RAP sets. With no issues in the WildList or clean sets, Check Point comfortably regains its VB100 certified status.

Coranti’s multi-engine approach makes for a fairly hefty set-up/install process, with an initial installer weighing in at 45MB replaced with a whole new one of similar bulk as soon as it was run. With that done, online updating was required, and with an additional 270MB of data to pull down this took quite a lot longer to complete. Once this had finished, the actual install process was fairly fast and simple, but did require a reboot at the end.

Once up and running, we found the main interface reassuringly complete and serious-looking, with a thorough set of controls for the most demanding of users well laid out and simple to operate. Scanning speeds were rather slow, as might be expected from a solution that combines three separate engines, with long delays to access files, but usage of RAM and CPU cycles was lighter than expected.

The multi-engine technique pays off in the detection rates of course, with some scorching scores across the test sets, and the RAP sets particularly thoroughly demolished. The flip side is the risk of false positives, and this proved to be Coranti’s Achilles’ heel this month, with that handful of PDFs from Corel flagged by one of the engines as containing JavaScript exploits, thus denying the firm a VB100 award. This was made doubly sure by the fact that certain file extensions commonly used by spreading malware were not analysed on access.

Defenx is based in Switzerland and provides a range of tools tuned for the needs of the company’s local markets. The Security Suite is an adaptation of Agnitum’s Outpost, combining the Russian company’s well-regarded firewall technology with anti-malware centred around the VirusBuster engine. Despite the multiple functions, the installer is a reasonably lightweight 92MB with all updates included, but as might be expected it goes through a number of stages in the set-up process, many of which are associated with the firewall components. Nevertheless, with some quick choices the full process is completed in under a minute, and no reboot is required.

Controls are clearly labelled and easy to use, and tests ran through swiftly, aided by some nifty improvement in scanning times after initial familiarization with files. On-access speeds were not outstanding at first but also improved greatly, and resource consumption was fairly low. Detection rates in the main sets were decent, with the scan of the clean set producing a number of warnings of packed files but no more serious alerts; the WildList was handled without problems, and a VB100 is duly earned by Defenx.

Making its third consecutive appearance on the VB100 test bench, Digital Defender’s 47MB installer runs through the standard series of steps and needs no reboot to complete, the whole business taking just a few moments. The interface is clean and clear, providing an impressive degree of control for what appears on the surface to be a pared-down product. The product ran just as cleanly, causing no upsets through the tests and recording some respectable scanning speeds with a solid regularity but no indication of smart filtering of previously scanned items. File access lags were fairly low, and RAM usage notably minimal.

Detection rates were fairly decent in general, with no problems in the clean set, but in the WildList set a single sample of a W32/Ircbot variant was not detected, which came as a surprise, given the clean sheets of other products based on the same (VirusBuster) technology. Digital Defender thus misses out on a VB100 award this month.

Blink has long been a popular entrant in our comparatives, with its clean, neat design and interesting extras – notably the vulnerability monitoring which is its major selling point. The installation process has a few extras in addition to the standard steps, including the option to password-protect the product settings, which is ‘highly recommended’ by the developers for added security. An initial configuration wizard follows the install, with no reboot needed, which sets up the additional components such as a firewall (disabled by default), HIPS and ‘system protection’ features, many of which are not covered by our tests. The interface, like the set-up process, is displayed in a pleasant pastelly peach tone, and as usual we found it slick, smooth and clearly laid out.

Running through the speed tests proved rather a test of endurance, with the sandbox facility adding some serious time onto the on-demand scans – not so much ‘blink and you’ll miss it’ as ‘take forty winks while you wait for it to finish’. On-access times were just as sluggish, although RAM usage was no higher than many other entrants this month. In the infected sets detection rates were generally pretty decent. In the WildList set all was well, after some problems with W32/Virut in recent comparatives, but luck was not on eEye’s side, with a single sample in the clean set – part of a DVD authoring suite from major developer Roxio – labelled as a Swizzor trojan, and once again Blink is denied a VB100 despite a generally decent showing.

Having rebranded itself slightly to drop its former ‘A-Squared’ name in favour of a more directly relevant title, Emsisoft’s product has grown in slickness and clarity from test to test. The product was provided as a 103MB installer package, which we updated and activated online prior to commencing testing, although a fully updated package of roughly the same size was also provided later. The initial phase of the set-up followed the standard path, but some post-install set-up included activation options ranging from a completely free trial, via a longer trial process requiring submission of contact information, to fully paid licensing. An impressive range of languages was also offered. On completing installation, with no need for a reboot, the product offered to ‘clean your computer’, by which it meant perform a scan. On looking over the installed items, we noted the old ‘a2’ references still lurking in some paths, as is common in the early stages of a rebranding.

Initial testing was somewhat hampered by odd responses to our testing tools; some tweaking of settings made the product operate more in line with our needs, but it still disrupted the gathering of performance data, and we had to disable the behaviour blocker to get our scripts to run successfully. At one point during the simple opening of clean files and snapshotting of system performance information, the whole system locked up badly and had to be restarted using the power reset button. Eventually, however, we got some results which showed some very heavy on-access overheads on first visit. Scanning speeds increased massively in the ‘warm’ scans, and very little RAM but a lot of CPU cycles were used. On-demand speeds were fairly sedate in some sets, but reasonable in others, notably the media & documents set.

In the infected sets, scores were truly splendid across the board. The clean sets yielded a couple of alerts, one noting that the popular IRC tool MIRC is indeed an IRC tool, and the other pointing out that a tool for converting PDFs to Word documents – recommended by the editors of a popular download site in recent months – could be labelled a ‘PDF cracker’ and thus qualified as riskware. Both these alerts were comfortably allowed as only ‘suspicious’ under the VB100 rules, and Emsisoft only required a clean run through the WildList set to qualify for its first VB100 award. After an initial scare when some items appeared not to be spotted, rechecking of logs showed full coverage, and Emsisoft’s product joins the ranks of VB100 certified solutions, with our congratulations.
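The pass/fail reasoning applied to each product can be summarised in a few lines. The sketch below is illustrative only: the `Result` fields and the `vb100_pass` function are hypothetical names, and the code encodes just the rules mentioned in this review (no misses in the WildList set, on demand or on access; no full false alarms in the clean set; alerts classed as merely ‘suspicious’ are tolerated). It is not the official certification procedure.

```python
from dataclasses import dataclass


@dataclass
class Result:
    """Hypothetical per-product summary; the field names are illustrative."""
    wildlist_missed_on_demand: int
    wildlist_missed_on_access: int
    clean_false_alarms: int      # full detections raised on clean files
    clean_suspicious: int = 0    # 'suspicious' or riskware-style alerts only


def vb100_pass(r: Result) -> bool:
    """Return True if the result meets the rules as described in the text."""
    no_misses = (r.wildlist_missed_on_demand == 0
                 and r.wildlist_missed_on_access == 0)
    no_false_alarms = r.clean_false_alarms == 0
    # 'Suspicious' alerts are deliberately ignored: they do not count
    # as false alarms under the rules described in the review.
    return no_misses and no_false_alarms


# Made-up example inputs, not measured results.
two_suspicious_alerts = Result(0, 0, 0, clean_suspicious=2)
one_wildlist_miss = Result(1, 1, 0)
one_false_alarm = Result(0, 0, 1)

print(vb100_pass(two_suspicious_alerts))
print(vb100_pass(one_wildlist_miss))
print(vb100_pass(one_false_alarm))
```

The three example inputs are invented to show the three outcomes discussed in this review: a pass despite suspicious alerts, a fail on a single WildList miss, and a fail on a single clean-set false alarm.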

The latest offering from VB100 regular eScan has a pretty nifty set-up process from its sizeable 127MB install package, which is slowed down by the installation of some additional libraries, and includes a quick scan of vital areas during the process. Once up and running, the main interface is a delight to behold, with a series of icons along the bottom which enlarge when rolled over like the task panel on a Mac. This encouraged much playing among the lab team.

Once we had tired of this little pleasure, testing proceeded apace, helped by the ample configuration controls provided beneath the silky smooth front end. On-demand speeds were rather on the slow side, speeding up greatly on second viewing of known files, and on-access lags also seemed to benefit from familiarity, while RAM usage was surprisingly low. Detection rates, meanwhile, were very decent, with nothing to complain about in any of the main or RAP sets. The clean set scan included a few alerts on files marked as ‘Null:Corrupted’, which was no problem, but in the WildList set a single sample of W32/Koobface was missed, thus denying eScan a VB100 award this month.

ESET provided its current product, with all updates rolled in, as a very compact 40MB package. Installing ran as per usual, with information and options presented regarding taking part in the ThreatSense.net intelligence system, and the company’s trademark insistence on a decision regarding the detection of ‘potentially unwanted’ items. No reboot was needed to finish off, and the whole job was done in a minute or so.

Once installed, the familiar and much admired interface provided a great abundance of fine-tuning options in its many advanced set-up pages, with a good balance between simplicity on the surface and more technical completeness beneath. Once again, however, we noted that, despite what appeared to be options to enable it, we were unable to persuade the product to unpack archive files on access.

Generally, the product ran through the tests pretty solidly, but we did observe a few minor issues with the interface, with a number of scans reaching 99% and remaining there without ever reaching the end. A check of the logs showed that the scans had actually finished but hadn’t quite managed to display this fact properly. Also, in the most stressful portions of the on-access tests we saw some messages warning that the scanner had stopped working, but it appeared to be still running OK and no gap in protection was discernible.

Scanning speeds were pretty decent, especially given the thorough defaults on demand, and there were some reasonable and reassuringly stable file access times. Memory usage was among the lowest in this month’s comparative, with CPU drain not breaking the bank either. Detection rates were as excellent as ever, with some superb RAP scores; no issues emerged in the WildList or clean sets, and ESET continues its monster unbroken run of VB100 passes.

Twister has become a fairly familiar face in our comparatives, edging ever closer to that precious first VB100 award. The installer, 52MB with 26MB of updates, runs through quickly and easily with no surprises, ending with a call for a reboot. The interface is slick and serious-looking, with lots of controls, buttons, options and dialogs, but remains fairly simple to navigate after a little initial exploration.

Scanning speeds and on-access overheads were a bit of a mixed bag, with some fast times and some slower ones, depending on file types. Meanwhile, performance measures showed some very high memory consumption but not too much pressure on the CPU.

On-access scanning does not offer the option simply to block access to infected files, and logging seems only to function once files have been ‘cleaned’ – so we had to let the product romp through our sets, destroying as it went, which took quite some time. On-demand scans were much easier and more cooperative, and in the end some pretty decent scores were noted across the sets, with a solid showing in the RAP sets, declining slowly through the reactive weeks but with a steepish drop into week +1.

A couple of false alarms were produced in the clean sets, with the popular VLC video client being labelled as a TDSS trojan. The WildList set highlighted some problems with the complex Virut polymorphic samples, with a fair number missed, alongside a handful of the static worms and bots in the set. For now, that first VB100 award remains just out of reach for Filseclab.

Fortinet’s product came as a slender 9.8MB main package with an additional 150MB of updates. The set-up process was fairly routine, enlivened by noticing the full ‘endpoint security’ nomenclature (not mentioned elsewhere in the main product), and once again the option to install the full paid product or a free edition. Not having been provided with activation codes, we went for the free version, which seemed to meet our needs adequately. The set-up process had fairly few steps to go through and didn’t require a reboot to complete.

The product’s interface has remained pretty much unchanged for some time, and we have always found it fairly clearly laid out and intuitive. In the performance tests some fairly sluggish scanning times were not improved by faster ‘warm’ performances. Access lag times were pretty hefty, while use of system resources was on the heavy side too. Scans of infected sets proved even slower: a series of scheduled jobs set to run over a weekend overlapped each other despite what we assumed would be large amounts of extra time given to each scan. Returning after the weekend we found two of the scans still running, which eventually finished a day or two later. Another oddity observed was that the update package provided seemed to disable detection of the Eicar test file, which is used in our measures of depth of archive scanning. The sample file was detected with the original definitions in the base product however, so the data on compressed files was gathered prior to updating.

In the end we obtained a full set of data, showing some fairly respectable scores in most sets, with a good start to the RAP sets declining sharply in the more recent weeks. The WildList and clean sets presented no problems for the product, and a VB100 award is duly granted.

Frisk’s installer comes as a 25MB package, with an extra 48MB of updates. In offline situations these are applied simply by dropping the file into place after the installation process – which is fast and simple – and prior to the required reboot. Once the machine was back up after the reboot we quickly observed that the product remains the same icy white, pared-down minimalist thing it has long been.

The basic set of configuration options simplified testing somewhat, and with reasonable speeds in the scanning tests (impressively fast in the archive sets given the thorough defaults), lag times also around the middle of the field, and fairly low use of RAM but average CPU drain when busy, we quickly got to the end without problems. As in many previous tests, error messages were presented during lengthy scans of infected items warning that the program had encountered an error, but this didn’t seem to affect either scanning or protection.

Detection rates were pretty decent across the sets, with just a single suspicious alert in the clean sets and no problems in the WildList; Frisk thus earns another VB100 award.

F-Secure once again submitted two fairly similar products to this test. The first, Client Security, came as a 58MB installer with an additional updater measuring a little under 100MB. Installation was a fairly unremarkable process, following the standard path of welcome, EULA, install location, and going on to offer centralized or local install methods – implying that this is intended as a business product for installing in large networks. It all completed fairly quickly but required a reboot of the system to finish off.

The main interface is a little unusual, being mainly focused on providing status information, with the few controls relegated to a minor position at the bottom of the main window. For a corporate product it seems rather short on options. For example, it appears to be impossible to check any more than the standard list of file extensions on access – but it is possible that some additional controls may be available via some central management system.

Given our past experiences of the unreliable logging system, all large scans were run using the integrated command-line scanner. Nevertheless, we found the GUI fairly flaky under pressure, with the on-access tests against large infected sets leaving the product in something of a state, and on-demand scan options apparently left completely unresponsive until after a reboot. The scans of clean sets also appeared to be reporting rather small numbers of files being scanned, implying that perhaps large numbers were being skipped for some reason (possibly due to the limited extension list). On access, however, some impressive speeding up of checking known files was observed, hinting that the product may indeed be as fast and efficient as it seemed. Resource usage measures were fairly light.

Of course, many of the tests we run present considerably more stress than would be expected in a normal situation, but it is not unreasonable to expect a little more stability in a corporate-focused product. These issues aside, we managed to get to the end of testing without any major difficulty, and scanning speeds seemed fairly decent (if they can be trusted). Detection rates were unexceptionable though, with impressive scores across the board, and with no problems in the WildList or clean sets F-Secure earns another VB100 award.

The second of F-Secure’s products this month has a slightly larger installer, at 69MB, but uses the same updater and has a pretty similar set-up process and user interface. Speeds on demand were also similar, although on-access times were noticeably faster and resource usage considerably heavier – to the extent that we had to double-check the results columns hadn’t been misaligned with different products. Once again the main detection tests were run with a command-line scanner for fear of the logging issues noted in previous tests.

Detection rates were just as solid as the other product, with an excellent showing and no problems in the core certification sets, earning F-Secure a second VB100 pass this month.

G DATA’s latest version, fresh and ready for next year, came as a sizeable 211MB installer, including all required updates. The installation process went through quite a few steps, including details of a ‘feedback initiative’ and settings for automatic updating and scheduled scanning. A reboot was needed at the end.

The new version looks pretty good, with a simple and slick design which met with general approval from the lab team. Indeed we could find very little to criticize at all, although some delving into the comprehensive configuration system did yield the information that the on-access scanner ignores archives over 300KB by default (a fairly sensible compromise, which is most likely responsible for some samples in our archive depth test being skipped). At the end of a scan, the GUI insisted on us clicking the ‘apply action’ button, despite us having set the default action to ‘log only’, and it wouldn’t let us close the window until we had done this.

Scanning speeds were not too bad in the ‘cold’ run, and powered through in remarkable time once files had become familiar. Similar improvements were noted on access, with fairly slow initial checks balanced by lightning fast release of files once known to be safe, and resource usage was fairly sizeable but remained pretty steady through the various measures. Of course, detection rates were superb as always, with very little missed in the main sets and a stunning showing in the RAP sets. With everything looking so good, we were quite upset to observe a handful of rare false alarms in the clean sets – the same PDFs alerted on by one of the engine providers’ own products – which denied G DATA a VB100 award this month despite an otherwise awesome performance.

Ikarus returns to the VB100 test bench hoping for its first pass after a number of showings that have been marred by minor issues. The product was provided as usual as an ISO image of a CD installer, weighing in at a hefty 200MB with an additional 77MB of updates, but the initial set-up process is fast and simple. Post-install, with no reboot required, a few further set-up steps are needed, including configuring network settings and licensing, but nevertheless things are all up and running in short order. The .NET-style interface remains fairly simplistic, with few options and very little at all related to the main anti-malware functions, logging and updating taking up much of the controls.

Tests zipped through fairly nicely, with good speeds on demand, although on-access times were a little on the slower side; CPU drain was above average, but RAM usage was fairly low. As usual, the interface developed some odd behaviours during intense activity (on-demand scans provided no indication of progress, and our jobs could only be monitored by whether or not the GUI flickered and spasmed as a sign of activity). However, detection rates were solid and hugely impressive, as in several previous tests, with a particularly stunning showing in the RAP tests, and the WildList was handled impeccably despite the large numbers of polymorphic samples which have proven problematic in the past. The clean sets brought up a single alert, but as this warned that a popular IRC package was an IRC package and thus might prove a security risk, this was not a problem under the VB100 rules. After many sterling attempts, Ikarus thus finally earns its first VB100 certification – many congratulations are in order.

K7’s compact 52MB installer package runs through its business in double-quick time, with only a few basic steps and no reboot needed. Its interface is clear, simple and easy to navigate with a logical grouping of its various components, plenty of information and a pleasing burnt orange hue. Stability was generally solid, although the interface displayed some tendency to ‘go funny’ after more demanding scans – however, it recovered quickly and displayed no interruption in protection.

Scanning speeds were fairly average, and access lag fairly low, with very low resource usage. Detection rates in the main sets were pretty solid. RAP scores were slightly less impressive but still more than decent, and with no problems in the WildList and no false alarms in the clean set, another VB100 award heads K7’s way.

Kaspersky once again submitted a pair of products this month, with version 6 being the more business-focused of the two. The MSI installer package weighed in at a mere 63MB, although a multi-purpose install bundle was considerably larger, and installed fairly quickly and simply. A reboot and some additional set-up stages – mainly related to the firewall components – added to the preparation time.

Testing tripped along merrily for the most part, with some excellent speed improvements in the ‘warm’ scans, and on-access times were in the mid-range, with some very low RAM usage and slightly higher use of CPU cycles when busy. After large on-demand scans, logging proved something of an issue – large logs were clearly displayed in the well-designed and lucid GUI, but apparently impossible to export; a progress dialog lurked a small way in for several hours before we gave up on it and retried all the scans in smaller chunks to ensure usable results. These scores in the end proved thoroughly decent across the board, with no issues encountered in handling the required certification sets, and a VB100 award is granted to Kaspersky Lab’s version 6.

Update: please see 'update' section and revised tables below.

Excited to see the 2011 edition of Kaspersky’s product, we quickly started work on the 103MB installer, with its own similarly large update bundle, which tripped along quickly with no need to reboot. The new interface sports a rather sickly pale-green hue and has some quirks, but is mostly well thought out and simple to use once it has become familiar.

Again scanning speeds were helped by excellent caching of known results – slightly slower initially than the version 6 product and with not quite as remarkable speed-ups on second viewing of files, but on-access times were fairly similar, and in the performance measures CPU use had decreased notably at the expense of a small increase in memory use. In the infected sets some considerable wobbliness was observed, with whiting-out and crashing of the interface during scanning and once again some difficulties saving logs. Detection scores were superb however, with very little missed, and with no problems in the core sets Kaspersky earns a second VB100 award this month.

Update: please see 'update' section and revised tables below.

Another newcomer to our tests, Chinese developer Keniu provided version 1 of its product. An install package labelled ‘beta’ and some as yet untranslated areas of the interface (notably the EULA) hinted that the product is at a fairly early stage of development. Based on the Kaspersky engine, the product was provided as a 76MB install package, which ran through simply and, despite a 20-second pause to ‘analyse the system’, was all done in under a minute, no reboot required.

The interface is simple, bright and breezy, with large buttons marking the major functions and a limited but easy-to-use set of options. On-demand speeds were fairly slow, but on-access times not bad at all, while performance measures were fairly hefty. In the detection sets we had a few issues, with logs trimmed to miss off the ends of longer file paths, despite the GUI claiming to have exported logs ‘completely’. In the on-access tests we noticed the product getting heavily bogged down if left going for too long. Files seemed to be being processed extremely slowly after being left over a weekend and detection had clearly stopped working some time and several thousand samples before we eventually gave up on it. By re-running this in smaller chunks, and piecing logs back together based on unique elements in the paths, we were able to obtain some pretty decent results, as expected from the quality engine underlying things. Without any problems in the clean sets and with a clear run through the WildList, Keniu earns its first VB100 award despite some teething problems when the product is put under heavy stress.
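The piecing together of chunked logs described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the lab’s actual tooling: the tab-separated `path<TAB>verdict` log format, the six-digit sample directory used as the ‘unique element’ in each path, and the names `SAMPLE_ID` and `merge_chunk_logs` are all hypothetical.

```python
import re

# Hypothetical convention: every sample sits in a directory named with a
# unique six-digit ID, so the ID survives even when the end of a long
# path has been trimmed from the log.
SAMPLE_ID = re.compile(r"\\(\d{6})(?:\\|$)")


def merge_chunk_logs(chunks):
    """Merge the text of several partial logs into one {sample_id: verdict} map.

    Lines without a tab separator, or whose path contains no recognisable
    sample ID, are skipped. A sample that appears in more than one chunk
    keeps the verdict from the first chunk in which it was seen.
    """
    merged = {}
    for text in chunks:
        for line in text.splitlines():
            path, sep, verdict = line.partition("\t")
            match = SAMPLE_ID.search(path)
            if not sep or match is None:
                continue
            merged.setdefault(match.group(1), verdict.strip())
    return merged


# Made-up log fragments; the second overlaps the first and has a trimmed path.
chunk_a = "C:\\set\\000001\\a.exe\tTrojan.X\nC:\\set\\000002\\long\tWorm.Y\n"
chunk_b = "C:\\set\\000002\\long\tWorm.Y\nC:\\set\\000003\tVirus.Z\n"
merged = merge_chunk_logs([chunk_a, chunk_b])
print(len(merged))
```

Keying on an identifier embedded in the path, rather than on the full path string, is what allows entries with trimmed paths to be matched against the sample set.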

Kingsoft returns to the fray with its usual pair of near-identical products. The ‘Advanced’ edition was provided fully updated as a 51MB installer package, which zipped through quickly and simply and needed no reboot to complete. Controls are similarly simple and quick to navigate and operate, and what the product lacks in glossy design it usually makes up for in generally good behaviour. Speed tests were no more than reasonable, with no sign of advanced caching of known results; RAM usage was fairly low though, and CPU use even lower, with some fairly light impact on file accessing too. On a few occasions some dents in the smooth veneer were observed, notably when scanning the system drive, where it seemed to get stuck scanning a constantly growing log; a single file in the RAP sets seemed to trip the product up too, bringing scan runs to an abrupt halt with no notification or information.

Detection rates were generally fairly meagre, and although there were no false positives in the clean set, some of the many replicated samples of W32/Virut in the WildList set proved too much, and with a fair number of misses representing one strain Kingsoft’s ‘Advanced’ edition is denied a VB100 award this month.

With identical versioning and appearance, but a slightly smaller installer (at 46MB), Kingsoft’s Standard edition is pretty hard to tell apart from the Advanced version. The set-up process, user experience and scanning speeds were all-but-identical to those of its sibling; performance measures were closely matched too.

Detection rates were similarly on the low side, with the same issues in the system drive and RAP sets. Once again, there were no false positives generated in the clean set, but again a handful of Virut samples went undetected in the WildList set, meaning that no VB100 award can be granted.

The first of a pair of products from Lavasoft, this one is the company’s standard product, closely resembling those entered in a few previous comparatives. The fairly large 137MB installer came with all required updates and installed fairly rapidly, although one of the screens passed through along the way seemed to be entirely gibberish. It also offered to install Google’s Chrome browser, but skipping this step got the whole install completed in under a minute, including the required restart.

The interface was more or less unchanged from when we have encountered it on previous occasions – like several others this month it is starting to look a little plain and old-fashioned in the glitzy surroundings of a trendy modern desktop environment. However, it proved reasonably simple to navigate, providing a bare minimum of options and with a rather complex process for setting up custom scans; most users will be satisfied with the standard presets provided.

Some reasonable speeds were recorded in the clean sets, with fairly slow scan times and above average resource use, while the light on-access overheads can in part be explained by the limited range of items being checked. In the infected sets things were a little more troublesome, with scans repeatedly stopping silently with no error messages, the GUI simply disappearing as soon as we turned our backs. Fortunately, details of progress were being kept in a log entitled ‘RunningScanLog.log’, and after making numerous, ever-smaller runs over portions of our sets we eventually gathered a pretty much complete picture. Piecing this back together, we found no issues in the clean sets, and the WildList was handled well on demand. Running through again on access, however, we saw a handful of items being allowed to be opened by our testing tool. Investigating this oddity more closely, we found that these items had been noted in the product log, and promptly deleted when the product was set to auto-clean, thus making for a clean showing and earning Lavasoft its first VB100 award, despite a few lingering issues with stability under heavy stress.

The second of Lavasoft’s offerings this month came as something of a surprise, the bulky 397MB installer marking it out immediately as a somewhat different kettle of fish from the standard solution. The set-up runs through a large number of steps, including an initial check for newer versions and the option to add in some extras, including parental controls and a secure file shredder, which are not included by default. A reboot is needed to complete, and then it spends a few moments ‘initializing’ at first login.

All of this had a vaguely familiar air to it, and once the interface appeared a brief look around soon confirmed our suspicions, with the GUI clearly based on that of G DATA – even down to the G DATA name appearing in a few of the folders used by the product. Testing zipped through, helped as expected by some very impressive speed improvements over previously scanned items; the only thing slowing us down was a rather long pause opening the browser window for on-demand scans, but as most users wouldn’t be running lots of small scans one after the other from the interface, this is unlikely even to be noticed.

Detection rates are more important though, and the product stormed through our sets leaving an awesome trail of destruction. However, once again those pesky PDF files from Corel were alerted on in the clean set, and this stroke of bad luck keeps Lavasoft from picking up a second VB100 this month.

McAfee’s home-user product has taken part in a few comparatives of late, but familiarity hasn’t made it any more popular with the lab team. The installation process is entirely online, with a tiny 2.9MB starter file pulling down all the required components from the web. This amounted to some 116MB and took a good five minutes to pull down; it installed with reasonable promptness and without too much effort. No reboot was needed to get protection in place. The interface manages to be ugly and awkward to operate, heavily text-based and strangely laid out. Messaging was somewhat confusing, with some areas of the interface seeming to imply that on-access protection was disabled, while others claimed it was fully operational.

Very few options are provided for the user, and those that are available are hard to find, so our few simple needs (including recording details of the changes made to our system by the product) had to be implemented by means of adjustments in the registry. The ‘custom’ option for the on-demand scanner only provided options to scan whole drives or system areas, so our on-demand speed measures were taken using right-click scanning. Scanning speeds proved fairly reasonable, with some signs of improvement when scanning previously checked items, while on-access speeds seemed fairly zippy and resource usage was quite light.

The product crashed out after some of the larger scans of infected sets, leaving no information as to why, and of course having overwritten its own logs. We also noted that some Windows settings seemed to have been reset – notably the hiding of file extensions, which is one of the first things we tweak when setting up a new system. On several occasions this was found to have reverted to the default of hiding extensions, which is not only a security risk but also an activity exhibited by some malware.

In the clean sets a VNC client was correctly identified as a VNC client, and in the infected sets scores were generally pretty decent – this would most likely be improved by the product’s online lookup system, which is not available during the test runs. In the WildList set, however, a selection of Virut samples were not detected, and as a result no VB100 award can be granted to McAfee’s home-user offering this month.

Leaving these troubles behind with some relief, we come to McAfee’s corporate product which has long been popular with the lab team for its dependability, sensible design and solidity. It kicks off its installer with an offer to disable Microsoft’s Windows Defender, which worried us a little with its lack of details on the source of the offer. The rest of the installation process, running from a 26MB installer and a 77MB update file, was fast and easy, with a message at the end stating that a reboot was only required for some components, the core protection parts being operational from the off. The GUI remains grey and drab but easy to use, logically laid out and oozing trustworthiness, providing a splendid depth of configuration (ideal for a business environment).

Scanning speeds were decent with the default settings and not bad with more thorough scanning enabled, while on-access times also went from very fast to not bad when turned up to the max. RAM usage was reasonable too, with CPU use no higher than most this month. Detection rates were generally good, with some fairly decent RAP scores, and the clean sets were handled without issue, but again that small handful of W32/Virut samples were not detected and McAfee’s business solution also misses out on a VB100 award this month.

Microsoft’s free solution was provided as a tiny 7MB installer with an impressively slim 55MB of updates, and installs rapidly and easily, in a process only enlivened by the requirements of the ‘Windows Genuine Advantage’ programme. No reboot is needed, and with the clear and usable interface providing only basic controls, testing progressed nicely. Scanning speeds were unremarkable, with some slowish measurements on demand but fairly decent file access lags, while resource usage seemed rather on the high side. Detection rates were strong as ever, with a good showing in the RAP sets.

With no problems in the WildList or clean sets, Microsoft earns another VB100 award with ease.

Nifty returns to the VB100 line-up to test our testing skills to the extreme. Once again untranslated from its native Japanese, and with much of the script on the interface rendered as meaningless blocks and question marks in our version of Windows, navigation has never been simple, and is not helped by a somewhat eccentric interface layout.

Installation of the 178MB package (which included the latest updates) was not too tricky, with what seemed to be the standard set of stages for which the ‘next’ button was easy to spot. One screen was rather baffling however, consisting of a number of check boxes marked only with question marks, except for the words ‘Windows Update’. We soon had things up and running though, after an enforced reboot and, aided by previous experience and some tips from the developers, got through the test suite fairly painlessly.

Scanning speeds were pretty decent, helped out by the zippy ‘warm’ scans which are a trademark of the Kaspersky technology underlying the product, while resource usage was fairly average. The on-access detection tests went pretty smoothly, but the on-demand scans of infected sets took rather a long time. Logging seemed only to be available from the Windows event viewer, which of course had to be set not to abandon useful information after only a few minutes of scanning. Over time, large scans of infected sets seemed to slow down to a snail’s pace, and with time pressing, RAP scores could only be gathered on access. This may result in some slightly lower scores than the product is truly capable of, with additional heuristics likely to come into play on demand to explain the slowdown, but when less than a third of the sets had been completed after five days, it seemed best to provide at least some data rather than continue waiting.

Eventually results were gathered for the main sets, which showed the expected solid coverage, with no more to report in the clean sets than a couple of ‘suspicious’ items (the usual VNC and IRC packages alerted on as potential security risks). The WildList caused no issues either, and Nifty comfortably adds to its growing tally of VB100 passes.

Norman’s suite came as a 77MB package with all updates included, and only took a few steps and a reboot to get working, although some further set-up was needed after installation. The interface provides a basic range of options, and does so in a rather fiddly and uncomfortable manner for our tastes, but is generally well behaved and usable. On-demand scanning speeds were distinctly slow compared to the rest of the field – presumably thanks to the sandbox component’s thorough investigation of the behaviour of unfamiliar executables. Similar slowness was noted in the on-access measures, matched by high use of CPU cycles, although RAM use was not excessive.

Running the high-stress tests over the infected sets caused some problems with the GUI, which lost its links to the on-demand and scheduled scanning controls several times, denying they were installed despite them continuing to run jobs. Protection seemed to remain solid however, and detection was reasonable across the board, but in the clean set a familiar false alarm appeared, with part of Roxio’s DVD authoring suite labelled as a Swizzor trojan, thus denying Norman a VB100 once again.

PC Tools provided its full suite solution as a 134MB installation package, which runs through with reasonable speed and simplicity, warning at the end that a reboot may be required in a few minutes. After restarting, the interface is its usual self, looking somewhat drab and flat against the glossy backdrop of Vista’s Aero desktop. The layout is somewhat different from the norm, with a large number of sub-divisions to the scanning and protection, and in a quick scan of the system partition it was the only product to raise an alert – reporting a number of cookies found (each machine paid a brief visit to MSN.com to check connectivity prior to activation of the operating system, and presumably the cookies were picked up at this point).

Having grown familiar with the product during the process of a standalone review recently (see VB, July 2010, p.21), we suddenly found the layout a little confusing once again, failing to find some useful options and ending up having to let the product delete, disinfect and quarantine its way through the sets. Nevertheless, results were obtained without serious problems, and scanning speeds proved fairly average but fast over the important executables sets, with similarly lightish times in the on-access speed measures and reasonably high use of RAM and CPU cycles.

Detection rates were solid, with a very steady rate in the RAP sets, and with no issues handling the clean or WildList sets PC Tools earns a VB100 award without difficulty.

The second of PC Tools’ offerings this month was pretty similar to the first, but lacked a firewall and some other functions, and notably had some components of the multi-faceted ‘Intelli-guard’ system disabled in the free trial mode. The core parts, including standard filesystem anti-malware protection, were fully functional however. The installer was thus slightly smaller at 129MB, but the set-up process was similarly quick and to-the-point, with no restart needed this time.

The interface proved no problem to navigate – perhaps thanks to practice gained testing the previous product. We felt that a little was left to be desired in the area of in-depth options but everything we needed was to hand. Having become rather exhausted by the unending barrage of products by this point, we welcomed a little light relief in the form of the product’s cartoon logo – in which, as we have noted before, the ‘Doctor’ actually resembles a large glass of cold beer more than anything. One other quirk of the product is that in order for it to keep its detection logs intact it needs to be disconnected from any networks, otherwise it tries to send them out into space for feedback purposes.

The tests tripped along merrily without upset or any sign of instability even under heavy pressure, and again performance measurements were fairly decent if not exceptional. Likewise, detection rates were solid and reliable without being flashy. With no problems in the core certification areas, a second VB100 goes to PC Tools this month, along with our gratitude for a relatively painless testing experience.

Preventon’s compact 48MB installer zips along rapidly, a highlight being the extensive list of available languages including Danish and Dutch. No reboot is needed to get things up and working. The now-familiar interface is delightfully simple and packs a good level of controls into a small area, although much of this is available only to ‘pro’ users, with a fully licensed product.

On-demand speeds were somewhat mediocre, but file access lag times were not too intrusive, while RAM use was medium and CPU slightly above average. Detection rates were decent in general, with no false alarms; however, a single Ircbot sample in the WildList set was not detected, and Preventon therefore misses out on a VB100 award this month.

Proland’s 69MB install package goes about its business in a short and sweet fashion, the brief set-up process enlivened by the unusual but thoughtful offer to add a support contact to the Windows address book. Protection is in place in short order with no reboot required, and the main interface is bright and brisk with a nice simple layout that makes for easy use.

Scanning speeds were reasonable, on-access speeds decent and resource usage remarkably low, with CPU drain barely registering. Detection rates were similarly decent, closely matching those of other products based on the VirusBuster engine. With the clean set handled nicely and no issues with the WildList item which upset a few fellow users of the VirusBuster engine, Protector Plus proves worthy of another VB100 award.

Update: please see 'update' section and revised tables below.

Qihoo has had a clean run in its first couple of VB100 entries, and returns this month hoping for a third award. The product, kindly translated into English after an initial entry which only supported the company’s native Chinese, came as a 91MB install package with all updates included, and was set up in no time, with a bare minimum of steps, few options to tax the brain and no reboot needed. On opening the interface, options to make use of online ‘cloud’ protection and to join a feedback system are offered. Users are also urged to install ‘360 Security Guard’ for additional protection.

The interface itself is smooth and simple with a sensible, logical layout, and provides a good level of configuration controls. Scanning speeds were not hugely impressive but at least consistent, while on-access times were very swift and resource consumption very low. By default, the product only monitors ‘applications’ on access, and despite enabling an option to scan all files we saw no detection of the Eicar test file with a randomly chosen extension. Detection rates were solid across the board, with some excellent RAP scores, and the WildList caused no problems, but in the clean sets once again a selection of items, all contained in a single install package from Corel, were flagged as containing PDF exploits, spoiling Qihoo’s run of good fortune and denying the vendor a VB100 award this month.

Quick Heal’s solution has grown from its once minimal size to a mid-range 105MB, including all updates, but still zips into place with only simple, unchallenging queries and no reboot needed. The interface is a little confusing in places, but generally fairly easy to navigate. Scanning speeds, once remarkable, were this month no more than decent, with file access lags and resource consumption higher than expected, and some serious delays were imposed when trying to export larger scan logs.

Results showed some decent scores, with on-demand detection rates notably better than on access, and RAP scores were not bad either; no issues cropped up in the WildList set or clean sets, and a VB100 award is duly granted to Quick Heal.

Returnil has been working up to inclusion in a VB100 comparative review for a few months now, and we’ve looked forward to finally welcoming the vendor to the fold. The product, as hinted at by its title, is based on a virtualization system which allows the user to revert the system to a clean state at the click of a button – an interesting and unusual offering which we hope to examine in more depth in due course. For now, we mainly looked at the anti-malware component included as an extra, which is based on the Frisk engine.

The installer is 36MB, with an additional 22MB of updates, and runs through quite simply, with just a few simple steps and a reboot to complete. Once in place, a button lurks on the desktop to control the virtualization component. The anti-malware component is provided with a few basic options, which proved ample for our needs. It is attractive, lucid and well designed, and seemed to maintain a good level of stability during our stressful tests – although at one point in a large scan of the infected sets a crash was observed, with a warning that the Data Execution Prevention sub-system had prevented some unwanted activity; the machine had to be restarted to get things back to normal.

Pushing on, we witnessed some reasonable scanning speeds in the on-demand tests; file access lags and CPU use were a little on the high side, though memory use was no more than average. In the infected sets, scores were generally pretty decent, with a remarkably steady rate in the later three weeks of the RAP sets. No problems were seen in the WildList or clean sets, and Returnil’s interesting and unusual product proves worthy of a VB100 award.

Rising has had a rather sporadic and unpredictable history in VB100 testing over the past few years, with an on-off pattern of entries and a similarly up-and-down record of passes and fails. The latest product came as a mid-sized 84MB package, and installation was reasonably painless, with a few standard steps plus questions about allowing Windows services to access the local network. After a reboot, some further configuration is provided in wizard format, with a choice of skin for the GUI notable among the options (the team selected ‘Polar Dust’, a pleasant black-and-blue design).

Scanning speeds were fairly mediocre, on-access lag times were heavy and CPU use was also high, although memory was not overused. A few issues were encountered in the infected sets, with a message appearing during the biggest scan warning that ‘ravmond’ had stopped working – this did not appear to have affected the running scan however, and protection seemed to still be in place (or at least to have come back on again by the time we checked). When running the highly stressful on-access scan over the infected sets another issue emerged, with the whole window system turning milky white and refusing to respond; a hard reboot was needed to recover. Also adding to our problems, blocking of access does not operate in the standard manner, and the logs had to be checked to measure detection of items which our tools had been allowed to open.

Looking over the results, scores were not hugely impressive in most sets, though fairly even at least in the reactive portions of the RAP sets. In the WildList a handful of items were missed (oddly, different ones in on-demand and on-access checks). In the clean sets, a pair of false alarms were also noted, with ‘Generic Trojan’ alerts raised against the rather obscure software packages named Photoshop and Acrobat. Rising is thus denied a VB100 award this month.

Sophos provided its latest version just in time for this test, with additional components in this version including ‘cloud’-based scanning and much else not covered by our current testing regime. The 81MB installer was accompanied by only 4MB of updates, and ran through in decent time, a moment of interest being the offer to remove ‘third-party’ (meaning competitor) products. No reboot was needed to get going, and initial tests sped through rapidly, with excellent on-demand scan times only dented by turning up archive scanning. Similarly rapid results were obtained in the on-access tests, with pretty low resource usage.